Towards Efficient Convolutional Network Models with Filter Distribution Templates

Paper and Code

Apr 17, 2021

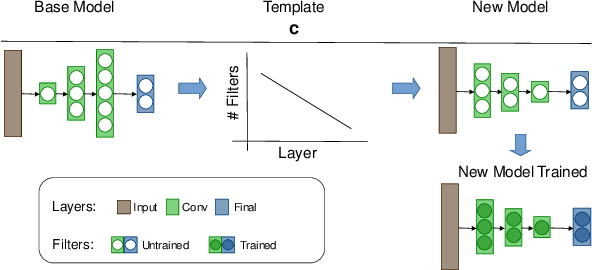

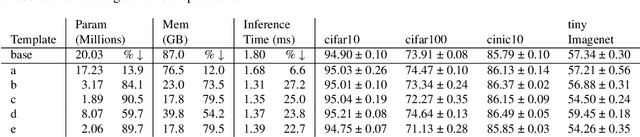

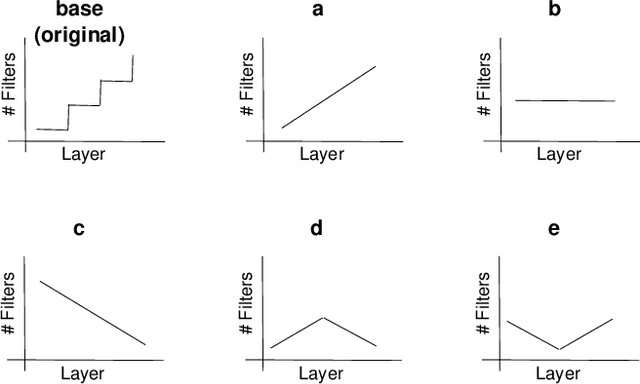

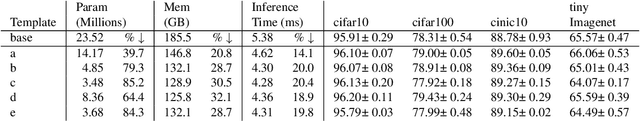

Increasing number of filters in deeper layers when feature maps are decreased is a widely adopted pattern in convolutional network design. It can be found in classical CNN architectures and in automatic discovered models. Even CNS methods commonly explore a selection of multipliers derived from this pyramidal pattern. We defy this practice by introducing a small set of templates consisting of easy to implement, intuitive and aggressive variations of the original pyramidal distribution of filters in VGG and ResNet architectures. Experiments on CIFAR, CINIC10 and TinyImagenet datasets show that models produced by our templates, are more efficient in terms of fewer parameters and memory needs.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge