Towards Deep Machine Reasoning: a Prototype-based Deep Neural Network with Decision Tree Inference

Paper and Code

Feb 02, 2020

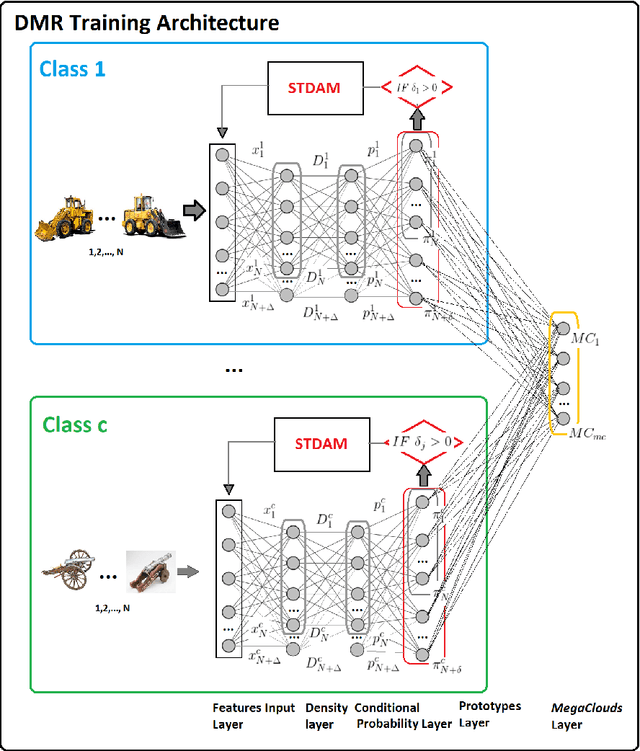

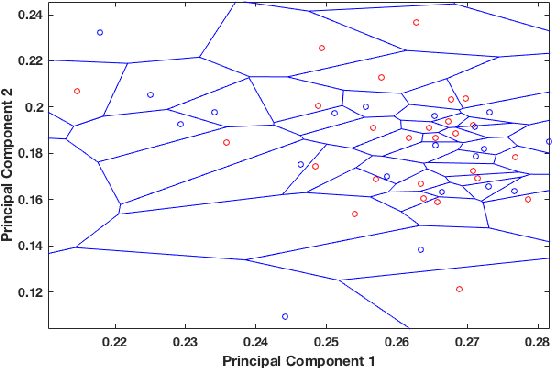

In this paper we introduce the DMR -- a prototype-based method and network architecture for deep learning which is using a decision tree (DT)-based inference and synthetic data to balance the classes. It builds upon the recently introduced xDNN method addressing more complex multi-class problems, specifically when classes are highly imbalanced. DMR moves away from a direct decision based on all classes towards a layered DT of pair-wise class comparisons. In addition, it forces the prototypes to be balanced between classes regardless of possible class imbalances of the training data. It has two novel mechanisms, namely i) using a DT to determine the winning class label, and ii) balancing the classes by synthesizing data around the prototypes determined from the available training data. As a result, we improved significantly the performance of the resulting fully explainable DNN as evidenced by the best reported result on the well know benchmark problem Caltech-101 surpassing our own recently published "world record". Furthermore, we also achieved another "world record" for another very hard benchmark problem, namely Caltech-256 as well as surpassed the results of other approaches on Faces-1999 problem. In summary, we propose a new approach specifically advantageous for imbalanced multi-class problems that achieved two world records on well known hard benchmark problems and the best result on another problem in terms of accuracy. Moreover, DMR offers full explainability, does not require GPUs and can continue to learn from new data by adding new prototypes preserving the previous ones but not requiring full retraining.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge