Toward 6-DOF Autonomous Underwater Vehicle Energy-Aware Position Control based on Deep Reinforcement Learning: Preliminary Results

Paper and Code

Feb 25, 2025

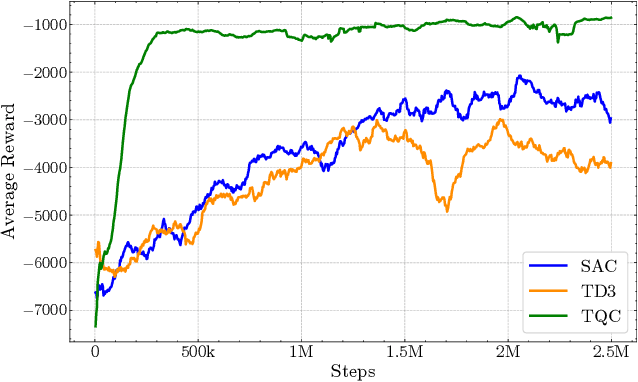

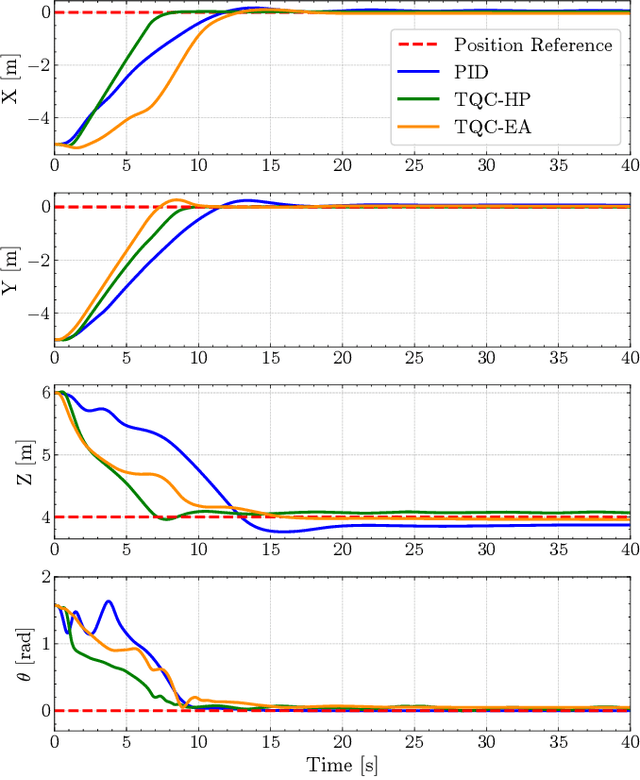

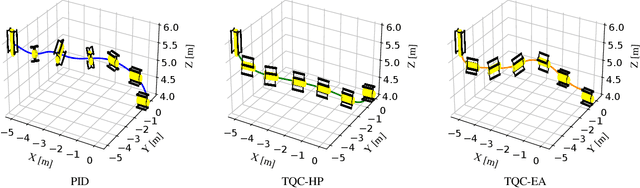

The use of autonomous underwater vehicles (AUVs) for surveying, mapping, and inspecting unexplored underwater areas plays a crucial role, where maneuverability and power efficiency are key factors for extending the use of these platforms, making six degrees of freedom (6-DOF) holonomic platforms essential tools. Although Proportional-Integral-Derivative (PID) and Model Predictive Control controllers are widely used in these applications, they often require accurate system knowledge, struggle with repeatability when facing payload or configuration changes, and can be time-consuming to fine-tune. While more advanced methods based on Deep Reinforcement Learning (DRL) have been proposed, they are typically limited to operating in fewer degrees of freedom. This paper proposes a novel DRL-based approach for controlling holonomic 6-DOF AUVs using the Truncated Quantile Critics (TQC) algorithm, which does not require manual tuning and directly feeds commands to the thrusters without prior knowledge of their configuration. Furthermore, it incorporates power consumption directly into the reward function. Simulation results show that the TQC High-Performance method achieves better performance to a fine-tuned PID controller when reaching a goal point, while the TQC Energy-Aware method demonstrates slightly lower performance but consumes 30% less power on average.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge