Theoretical Foundations of Equitability and the Maximal Information Coefficient

Paper and Code

May 12, 2015

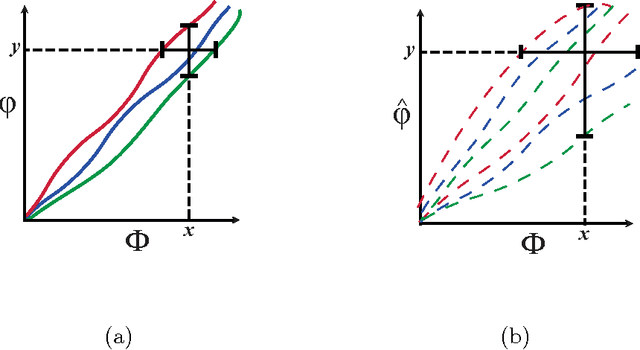

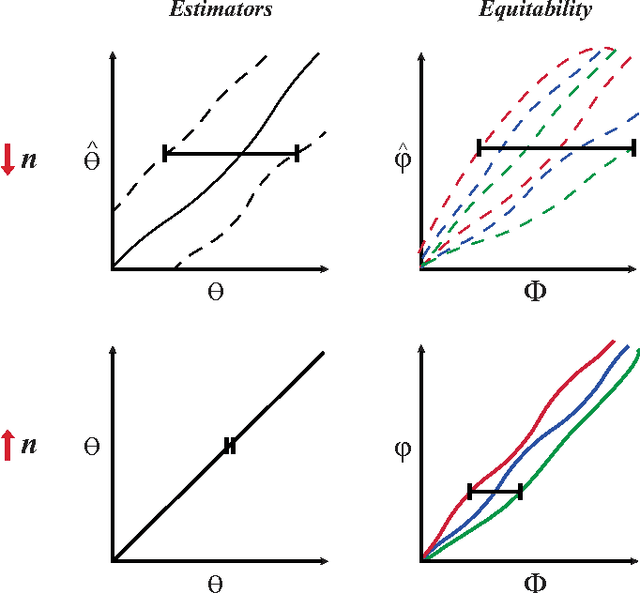

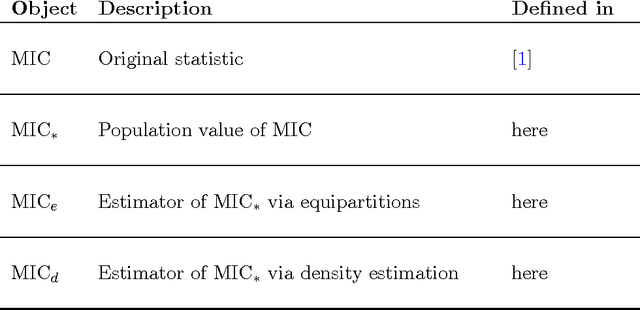

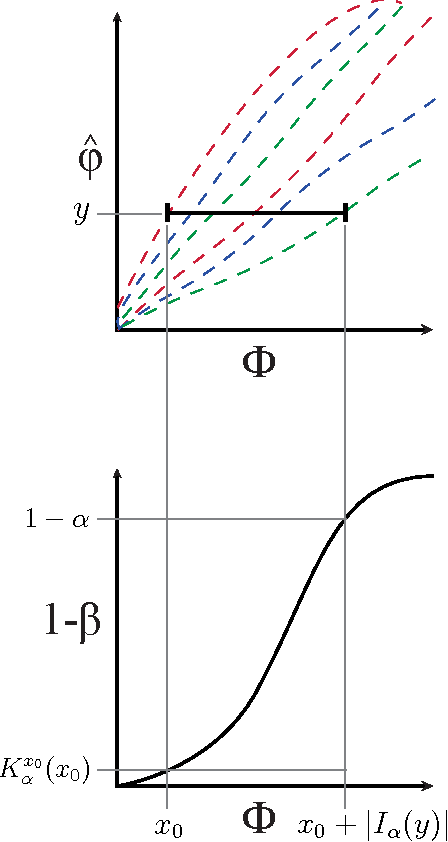

The maximal information coefficient (MIC) is a tool for finding the strongest pairwise relationships in a data set with many variables (Reshef et al., 2011). MIC is useful because it gives similar scores to equally noisy relationships of different types. This property, called {\em equitability}, is important for analyzing high-dimensional data sets. Here we formalize the theory behind both equitability and MIC in the language of estimation theory. This formalization has a number of advantages. First, it allows us to show that equitability is a generalization of power against statistical independence. Second, it allows us to compute and discuss the population value of MIC, which we call MIC_*. In doing so we generalize and strengthen the mathematical results proven in Reshef et al. (2011) and clarify the relationship between MIC and mutual information. Introducing MIC_* also enables us to reason about the properties of MIC more abstractly: for instance, we show that MIC_* is continuous and that there is a sense in which it is a canonical "smoothing" of mutual information. We also prove an alternate, equivalent characterization of MIC_* that we use to state new estimators of it as well as an algorithm for explicitly computing it when the joint probability density function of a pair of random variables is known. Our hope is that this paper provides a richer theoretical foundation for MIC and equitability going forward. This paper will be accompanied by a forthcoming companion paper that performs extensive empirical analysis and comparison to other methods and discusses the practical aspects of both equitability and the use of MIC and its related statistics.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge