The Uncanny Valley: A Comprehensive Analysis of Diffusion Models

Paper and Code

Feb 20, 2024

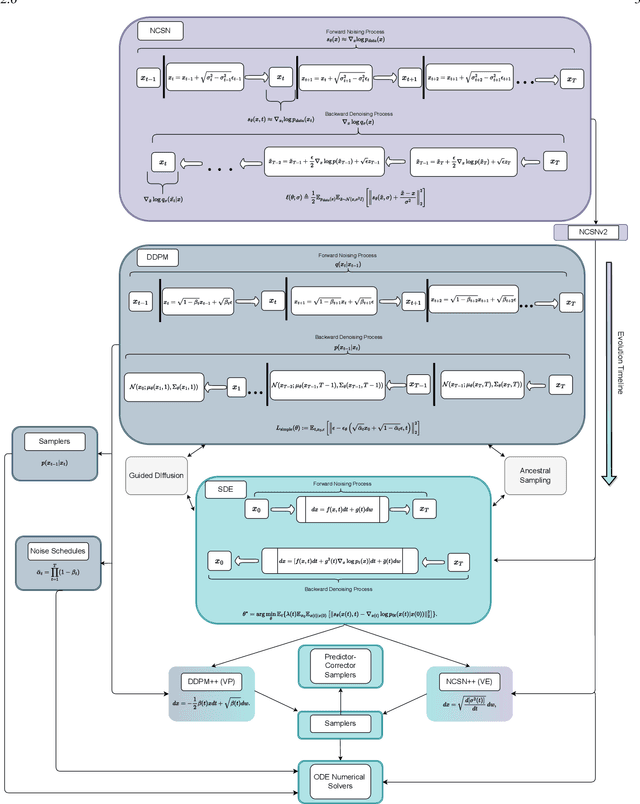

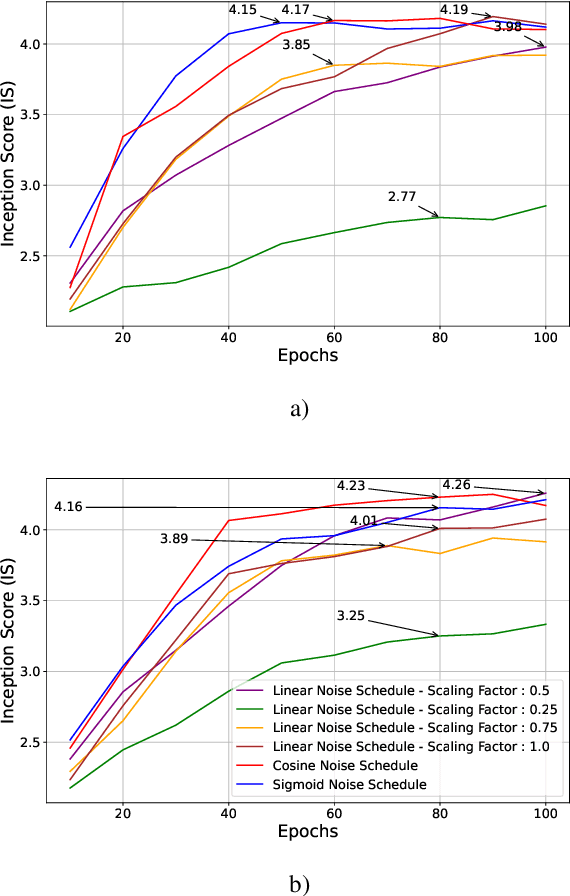

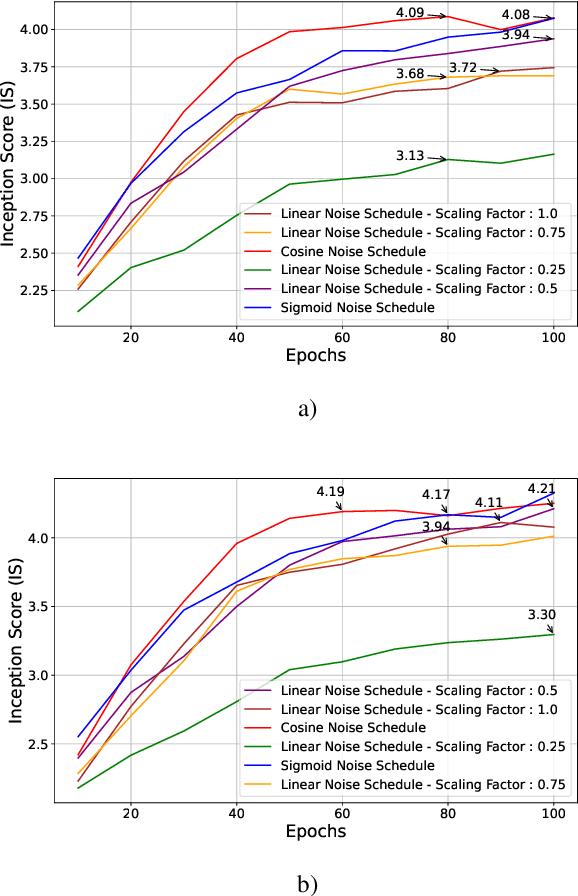

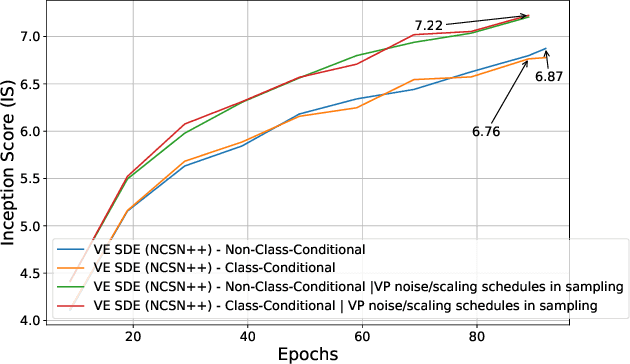

Through Diffusion Models (DMs), we have made significant advances in generating high-quality images. Our exploration of these models delves deeply into their core operational principles by systematically investigating key aspects across various DM architectures: i) noise schedules, ii) samplers, and iii) guidance. Our comprehensive examination of these models sheds light on their hidden fundamental mechanisms, revealing the concealed foundational elements that are essential for their effectiveness. Our analyses emphasize the hidden key factors that determine model performance, offering insights that contribute to the advancement of DMs. Past findings show that the configuration of noise schedules, samplers, and guidance is vital to the quality of generated images; however, models reach a stable level of quality across different configurations at a remarkably similar point, revealing that the decisive factors for optimal performance predominantly reside in the diffusion process dynamics and the structural design of the model's network, rather than the specifics of configuration details. Our comparative analysis reveals that Denoising Diffusion Probabilistic Model (DDPM)-based diffusion dynamics consistently outperform the Noise Conditioned Score Network (NCSN)-based ones, not only when evaluated in their original forms but also when continuous through Stochastic Differential Equation (SDE)-based implementations.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge