TARS: Traffic-Aware Radar Scene Flow Estimation

Paper and Code

Mar 13, 2025

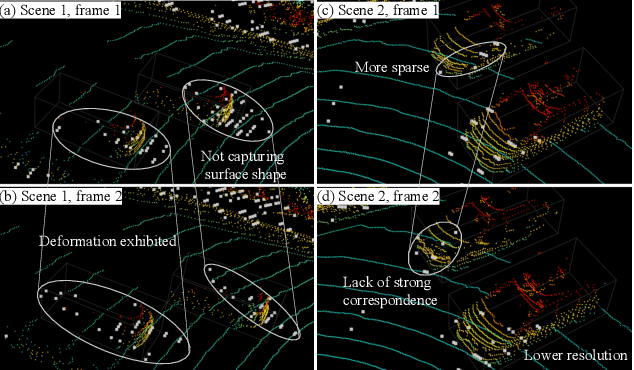

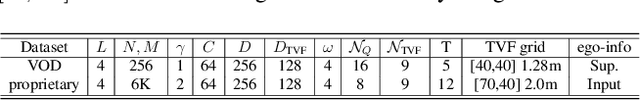

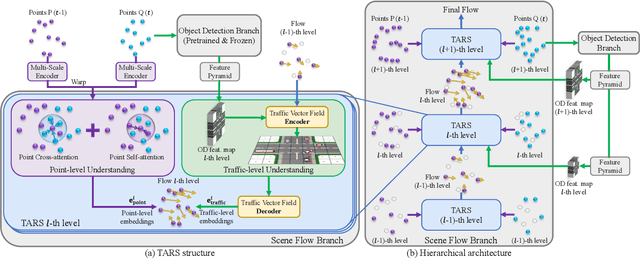

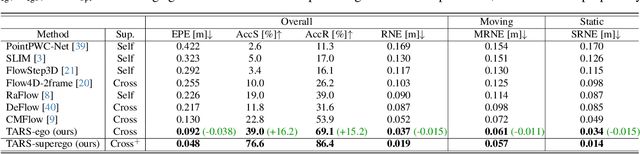

Scene flow provides crucial motion information for autonomous driving. Recent LiDAR scene flow models utilize the rigid-motion assumption at the instance level, assuming objects are rigid bodies. However, these instance-level methods are not suitable for sparse radar point clouds. In this work, we present a novel $\textbf{T}$raffic-$\textbf{A}$ware $\textbf{R}$adar $\textbf{S}$cene flow estimation method, named $\textbf{TARS}$, which utilizes the motion rigidity at the traffic level. To address the challenges in radar scene flow, we perform object detection and scene flow jointly and boost the latter. We incorporate the feature map from the object detector, trained with detection losses, to make radar scene flow aware of the environment and road users. Therefrom, we construct a Traffic Vector Field (TVF) in the feature space, enabling a holistic traffic-level scene understanding in our scene flow branch. When estimating the scene flow, we consider both point-level motion cues from point neighbors and traffic-level consistency of rigid motion within the space. TARS outperforms the state of the art on a proprietary dataset and the View-of-Delft dataset, improving the benchmarks by 23% and 15%, respectively.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge