Synapse Compression for Event-Based Convolutional-Neural-Network Accelerators

Paper and Code

Dec 25, 2021

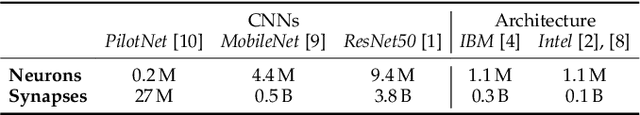

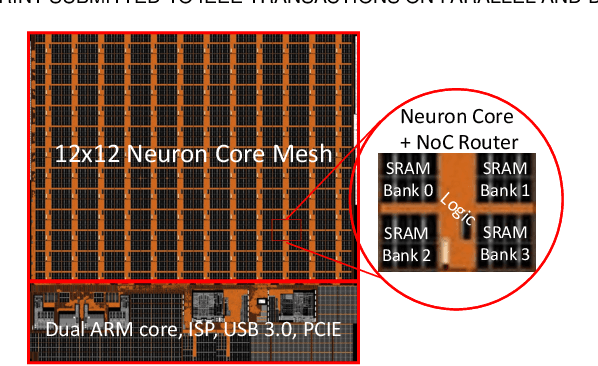

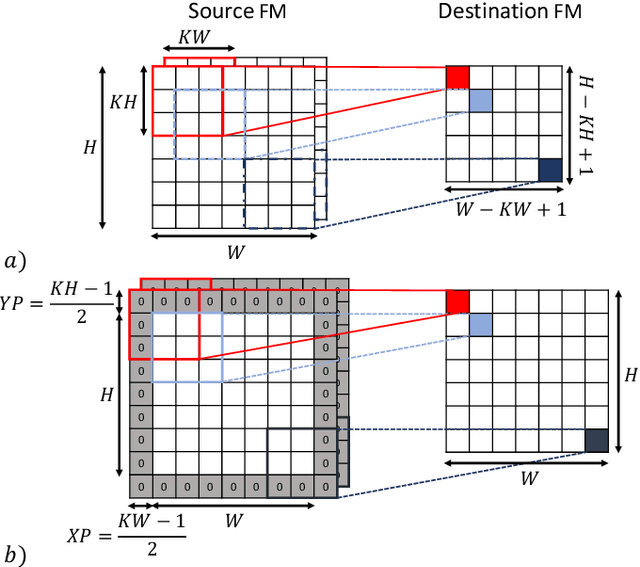

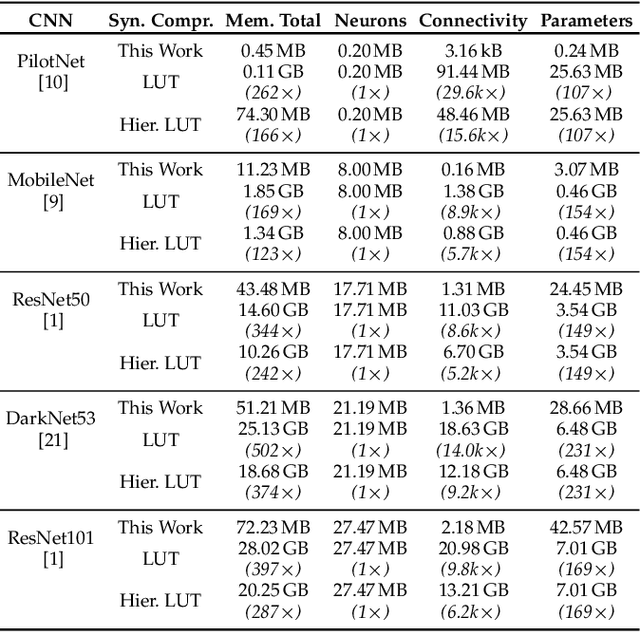

Manufacturing-viable neuromorphic chips require novel computer architectures to achieve the massively parallel and efficient information processing the brain supports so effortlessly. Emerging event-based architectures are making this dream a reality. However, the large memory requirements for synaptic connectivity are a showstopper for the execution of modern convolutional neural networks (CNNs) on massively parallel, event-based (spiking) architectures. This work overcomes this roadblock by contributing a lightweight hardware scheme to compress the synaptic memory requirements by several thousand times, enabling the execution of complex CNNs on a single chip of small form factor. A silicon implementation in a 12-nm technology shows that the technique increases the system's implementation cost by only 2%, despite achieving a total memory-footprint reduction of up to 374x compared to the best previously published technique.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge