Supervised Learning with Projected Entangled Pair States

Paper and Code

Sep 12, 2020

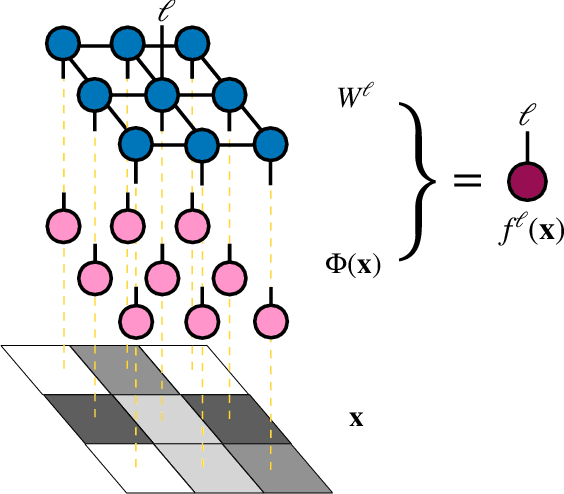

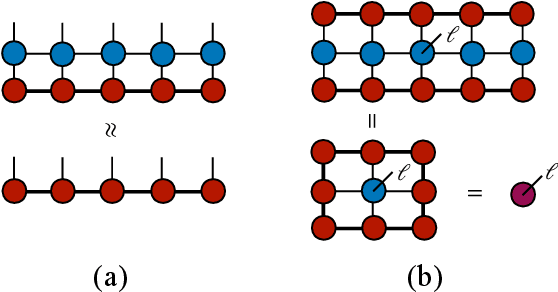

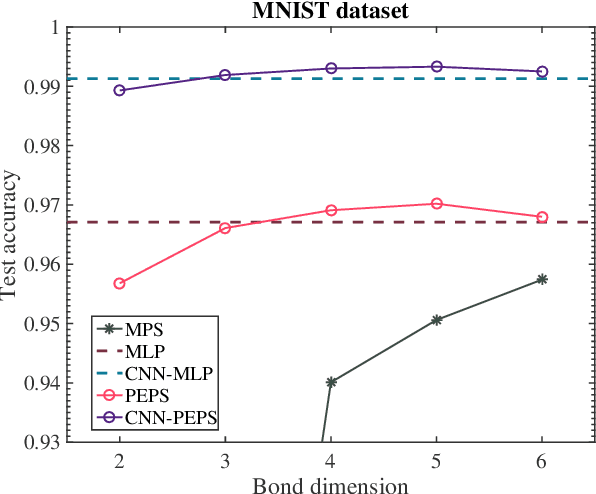

Tensor networks, a model that originated from quantum physics, has been gradually generalized as efficient models in machine learning in recent years. However, in order to achieve exact contraction, only tree-like tensor networks such as the matrix product states and tree tensor networks have been considered, even for modeling two-dimensional data such as images. In this work, we construct supervised learning models for images using the projected entangled pair states (PEPS), a two-dimensional tensor network having a similar structure prior to natural images. Our approach first performs a feature map, which transforms the image data to a product state on a grid, then contracts the product state to a PEPS with trainable parameters to predict image labels. The tensor elements of PEPS are trained by minimizing differences between training labels and predicted labels. The proposed model is evaluated on image classifications using the MNIST and the Fashion-MNIST datasets. We show that our model is significantly superior to existing models using tree-like tensor networks. Moreover, using the same input features, our method performs as well as the multilayer perceptron classifier, but with much fewer parameters and is more stable. Our results shed light on potential applications of two-dimensional tensor network models in machine learning.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge