Supervised and Unsupervised End-to-End Deep Learning for Gene Ontology Classification of Neural In Situ Hybridization Images

Paper and Code

Nov 30, 2019

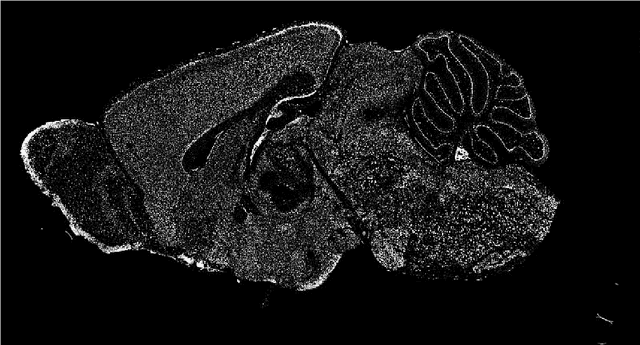

In recent years, large datasets of high-resolution mammalian neural images have become available, which has prompted active research on the analysis of gene expression data. Traditional image processing methods are typically applied for learning functional representations of genes, based on their expressions in these brain images. In this paper, we describe a novel end-to-end deep learning-based method for generating compact representations of in situ hybridization (ISH) images, which are invariant-to-translation. In contrast to traditional image processing methods, our method relies, instead, on deep convolutional denoising autoencoders (CDAE) for processing raw pixel inputs, and generating the desired compact image representations. We provide an in-depth description of our deep learning-based approach, and present extensive experimental results, demonstrating that representations extracted by CDAE can help learn features of functional gene ontology categories for their classification in a highly accurate manner. Our methods improve the previous state-of-the-art classification rate (Liscovitch, et al.) from an average AUC of 0.92 to 0.997, i.e., it achieves 96% reduction in error rate. Furthermore, the representation vectors generated due to our method are more compact in comparison to previous state-of-the-art methods, allowing for a more efficient high-level representation of images. These results are obtained with significantly downsampled images in comparison to the original high-resolution ones, further underscoring the robustness of our proposed method.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge