Subsampled Fourier Ptychography using Pretrained Invertible and Untrained Network Priors


May 13, 2020

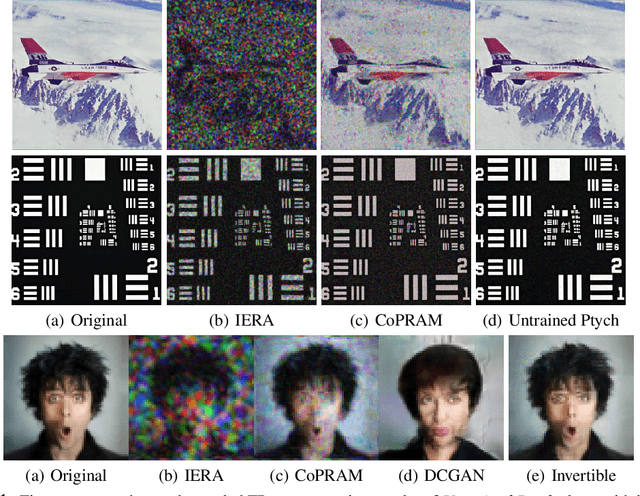

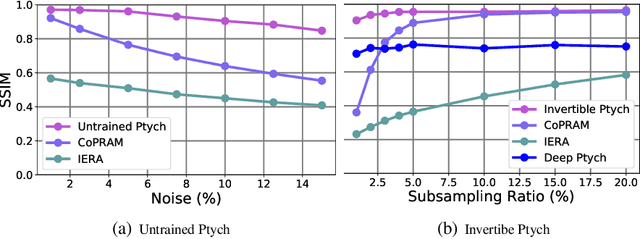

Pretrained generative models have recently shown promising reconstruction quality for subsampled Fourier Ptychography (FP) at extremely low sampling rates and high noise levels. However, a significant drawback of these pretrained generative priors is their limited representation capability. Moreover, training these generative models requires a large number of fully observed, clean samples from a particular class of images, such as faces or digits, which are prohibitive to obtain in the context of FP. In this paper, we propose to leverage pretrained invertible generative models to mitigate the representation error, and untrained generative models to remove the need for a large number of training images. Through extensive experiments, we demonstrate the effectiveness of the proposed approaches for FP at low sampling rates and high noise levels.
