STURE: Spatial-Temporal Mutual Representation Learning for Robust Data Association in Online Multi-Object Tracking

Paper and Code

Jan 19, 2022

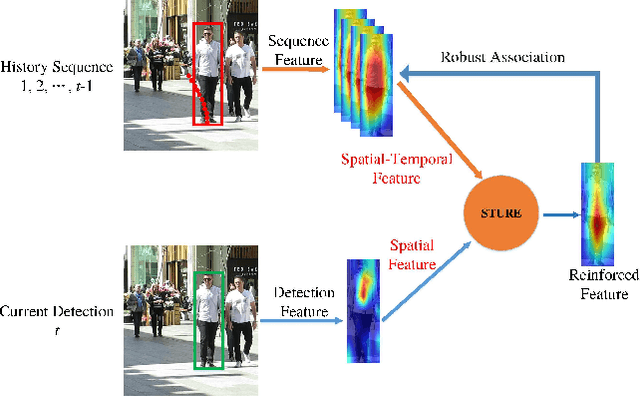

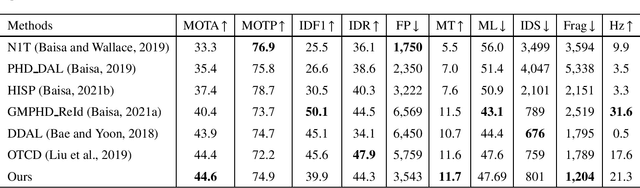

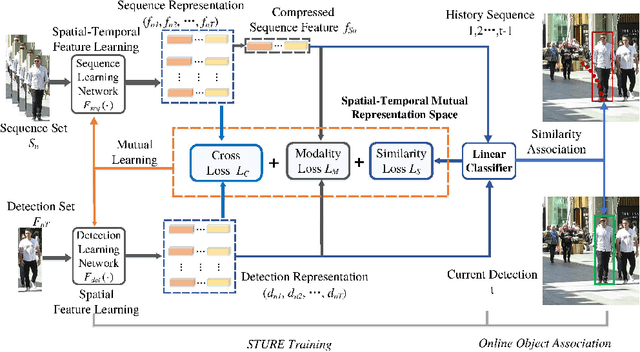

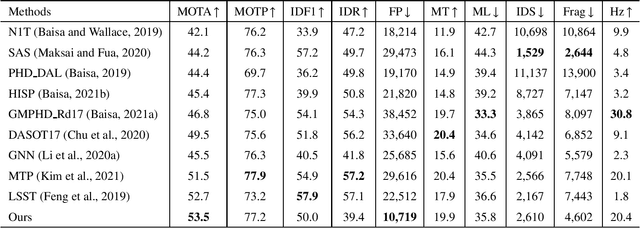

Online multi-object tracking (MOT) is a longstanding task for computer vision and intelligent vehicle platform. At present, the main paradigm is tracking-by-detection, and the main difficulty of this paradigm is how to associate the current candidate detection with the historical tracklets. However, in the MOT scenarios, each historical tracklet is composed of an object sequence, while each candidate detection is just a flat image, which lacks the temporal features of the object sequence. The feature difference between current candidate detection and historical tracklets makes the object association much harder. Therefore, we propose a Spatial-Temporal Mutual {Representation} Learning (STURE) approach which learns spatial-temporal representations between current candidate detection and historical sequence in a mutual representation space. For the historical trackelets, the detection learning network is forced to match the representations of sequence learning network in a mutual representation space. The proposed approach is capable of extracting more distinguishing detection and sequence representations by using various designed losses in object association. As a result, spatial-temporal feature is learned mutually to reinforce the current detection features, and the feature difference can be relieved. To prove the robustness of the STURE, it is applied to the public MOT challenge benchmarks and performs well compared with various state-of-the-art online MOT trackers based on identity-preserving metrics.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge