Stochastic Weight Matrix-based Regularization Methods for Deep Neural Networks

Paper and Code

Sep 26, 2019

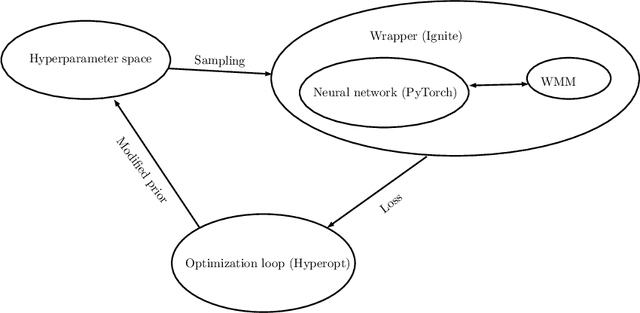

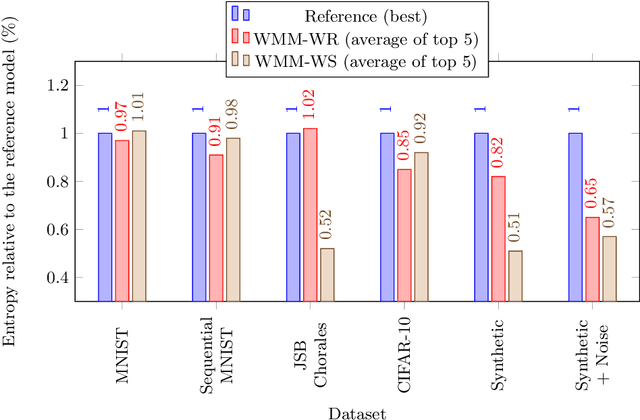

This paper introduces two widely applicable regularization methods that directly modify weight matrices. The first, Weight Reinitialization, relies on a simplified Bayesian assumption and partially resets a sparse subset of the parameters. The second, Weight Shuffling, adds entropy- and weight-distribution-invariant non-white noise to the parameters; it can also be interpreted as an ensemble approach. The proposed methods are evaluated on benchmark datasets such as MNIST, CIFAR-10, and the JSB Chorales database, as well as on time series modeling tasks. We report gains in both the performance and the entropy of the analyzed networks. Our code is available as a GitHub repository (https://github.com/rpatrik96/lod-wmm-2019).
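To make the two ideas concrete, here is a minimal sketch in plain Python. It is not the code from the linked repository, and the abstract does not specify the details, so several choices are assumptions made only for illustration: the function names `reinitialize_weights` and `shuffle_weights`, the `fraction` parameter, uniform random selection of the affected entries, and a zero-mean Gaussian with standard deviation `init_std` as the reset distribution.

```python
import random


def _all_positions(weights):
    """Return every (row, col) index pair of a list-of-lists matrix."""
    return [(r, c) for r in range(len(weights)) for c in range(len(weights[0]))]


def reinitialize_weights(weights, fraction, rng, init_std=0.1):
    """Reset a sparse, randomly chosen subset of entries to fresh samples.

    Assumption: entries are picked uniformly at random and redrawn from
    N(0, init_std^2); the paper may select and redraw them differently.
    """
    positions = _all_positions(weights)
    k = int(fraction * len(positions))
    out = [row[:] for row in weights]  # copy so the input is left untouched
    for r, c in rng.sample(positions, k):
        out[r][c] = rng.gauss(0.0, init_std)
    return out


def shuffle_weights(weights, fraction, rng):
    """Permute the values of a randomly chosen subset of entries.

    Only positions change, so the multiset of weight values (and hence
    the empirical weight distribution) is preserved.
    Assumption: the subset is chosen uniformly over the whole matrix.
    """
    positions = _all_positions(weights)
    k = int(fraction * len(positions))
    chosen = rng.sample(positions, k)
    values = [weights[r][c] for r, c in chosen]
    rng.shuffle(values)
    out = [row[:] for row in weights]
    for (r, c), v in zip(chosen, values):
        out[r][c] = v
    return out


if __name__ == "__main__":
    rng = random.Random(0)
    w = [[float(4 * r + c) for c in range(4)] for r in range(3)]
    print(shuffle_weights(w, 0.5, rng))
    print(reinitialize_weights(w, 0.25, rng))
```

In a training loop, either function would presumably be applied to a layer's weight matrix at intervals between gradient updates. The schedule and the affected fraction are hyperparameters that this sketch leaves open.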
