Stochastic Spiking Neural Networks with First-to-Spike Coding

Paper and Code

Apr 26, 2024

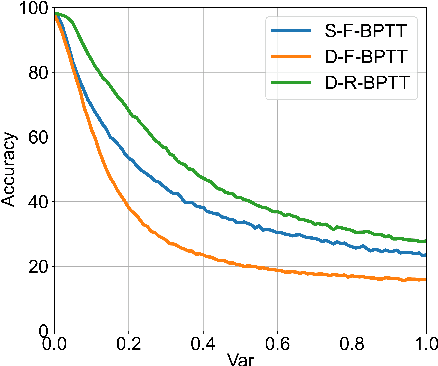

Spiking Neural Networks (SNNs), recognized as the third generation of neural networks, are known for their bio-plausibility and energy efficiency, especially when implemented on neuromorphic hardware. However, the majority of existing studies on SNNs have concentrated on deterministic neurons with rate coding, a method that incurs substantial computational overhead due to lengthy information integration times and fails to fully harness the brain's probabilistic inference capabilities and temporal dynamics. In this work, we explore the merger of novel computing and information encoding schemes in SNN architectures where we integrate stochastic spiking neuron models with temporal coding techniques. Through extensive benchmarking with other deterministic SNNs and rate-based coding, we investigate the tradeoffs of our proposal in terms of accuracy, inference latency, spiking sparsity, energy consumption, and robustness. Our work is the first to extend the scalability of direct training approaches of stochastic SNNs with temporal encoding to VGG architectures and beyond-MNIST datasets.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge