Stochastic Modified Equations for Continuous Limit of Stochastic ADMM

Paper and Code

Mar 07, 2020

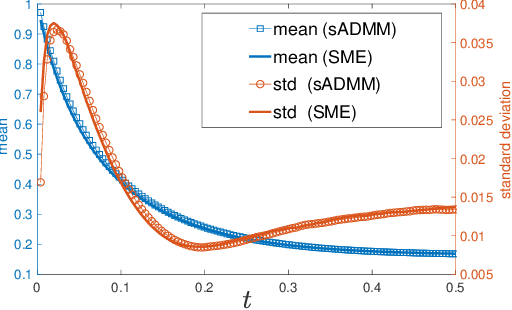

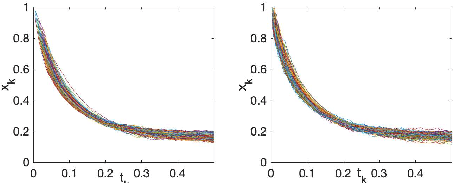

Stochastic version of alternating direction method of multiplier (ADMM) and its variants (linearized ADMM, gradient-based ADMM) plays a key role for modern large scale machine learning problems. One example is the regularized empirical risk minimization problem. In this work, we put different variants of stochastic ADMM into a unified form, which includes standard, linearized and gradient-based ADMM with relaxation, and study their dynamics via a continuous-time model approach. We adapt the mathematical framework of stochastic modified equation (SME), and show that the dynamics of stochastic ADMM is approximated by a class of stochastic differential equations with small noise parameters in the sense of weak approximation. The continuous-time analysis would uncover important analytical insights into the behaviors of the discrete-time algorithm, which are non-trivial to gain otherwise. For example, we could characterize the fluctuation of the solution paths precisely, and decide optimal stopping time to minimize the variance of solution paths.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge