Stochastic Aggregation in Graph Neural Networks


Feb 26, 2021

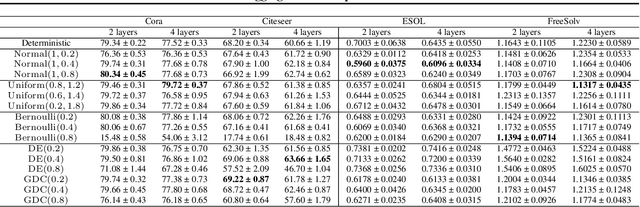

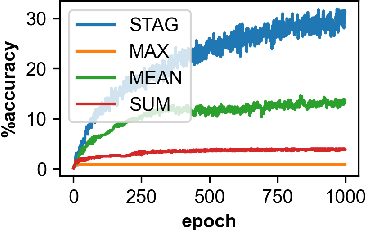

Graph neural networks (GNNs) manifest pathologies, including over-smoothing and limited discriminating power, as a result of suboptimally expressive aggregation mechanisms. We herein present a unifying framework for stochastic aggregation (STAG) in GNNs, in which noise is (adaptively) injected into the aggregation of neighborhood information to form node embeddings. We provide theoretical arguments that STAG models, with little overhead, remedy both of the aforementioned problems. In addition to fixed-noise models, we also propose probabilistic versions of STAG models and a variational inference framework to learn the noise posterior. We conduct illustrative experiments that specifically target the over-smoothing and multiset aggregation limitations. Furthermore, STAG improves the general performance of GNNs, as demonstrated by competitive results on common citation and molecular graph benchmark datasets.
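
The link between injected noise and discriminating power can be made concrete with a toy example. The sketch below is not the authors' implementation; it assumes scalar node features, mean aggregation, and multiplicative Gaussian edge noise with mean 1, all of which are illustrative choices, as are the hypothetical helper names `mean_aggregate`, `stochastic_mean_aggregate`, and `sample_embeddings`. It shows two neighborhoods (multisets) that plain mean aggregation maps to the same value, whereas their noisy aggregates have different variances, which is one intuition for how stochastic aggregation can separate multisets a deterministic aggregator conflates.

```python
import random
from statistics import mean, pvariance

def mean_aggregate(neighbor_feats):
    """Deterministic mean aggregation over a multiset of scalar neighbor features."""
    return mean(neighbor_feats)

def stochastic_mean_aggregate(neighbor_feats, noise_std, rng):
    """Mean aggregation in which every incoming message is scaled by a random
    edge weight drawn as 1 + N(0, noise_std^2). The Gaussian multiplicative
    noise is an illustrative assumption, not necessarily the paper's choice."""
    weights = [1.0 + rng.gauss(0.0, noise_std) for _ in neighbor_feats]
    return sum(w * x for w, x in zip(weights, neighbor_feats)) / len(neighbor_feats)

def sample_embeddings(neighbor_feats, noise_std, n_samples, seed=0):
    """Draw repeated stochastic aggregates to expose their distribution."""
    rng = random.Random(seed)
    return [stochastic_mean_aggregate(neighbor_feats, noise_std, rng)
            for _ in range(n_samples)]

# Two different neighborhoods (multisets) with the same mean.
small = [1.0, 2.0]
large = [1.0, 1.0, 2.0, 2.0]

# Deterministic mean aggregation cannot tell them apart.
print(mean_aggregate(small), mean_aggregate(large))  # prints: 1.5 1.5

# With noise, both aggregates keep mean 1.5 but differ in spread:
# Var = noise_std^2 * sum(x^2) / n^2, i.e. 0.3125 vs 0.15625 for noise_std = 0.5.
var_small = pvariance(sample_embeddings(small, 0.5, 20000))
var_large = pvariance(sample_embeddings(large, 0.5, 20000))
print(round(var_small, 3), round(var_large, 3))
```

Here `noise_std` is a hand-set constant, which corresponds loosely to the fixed-noise setting; in the probabilistic variants the abstract mentions, the noise distribution would instead be learned through variational inference.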
