Stealing Knowledge from Protected Deep Neural Networks Using Composite Unlabeled Data

Paper and Code

Dec 09, 2019

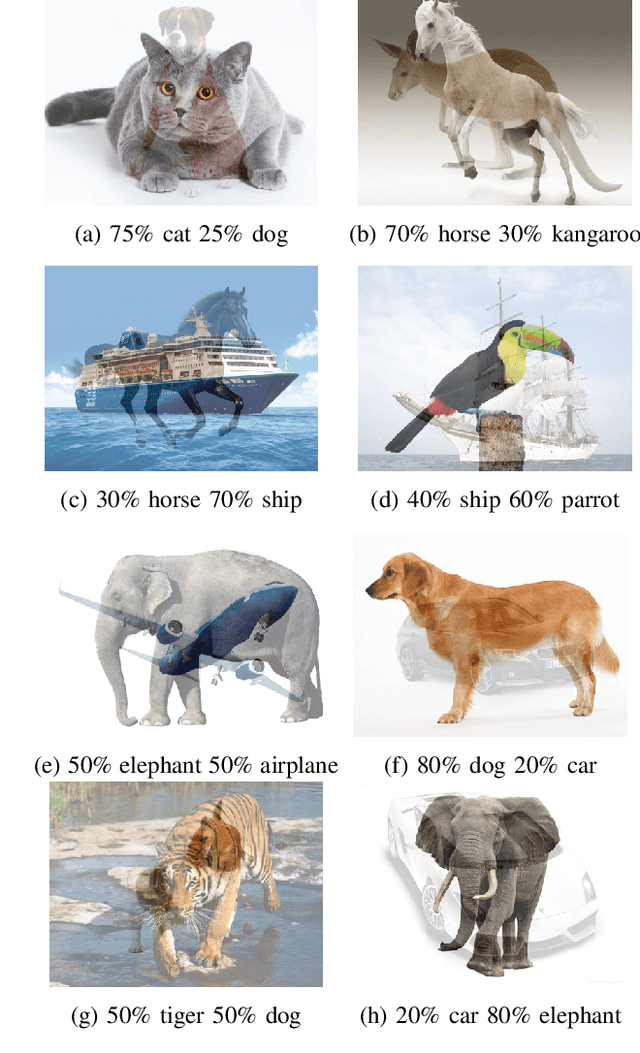

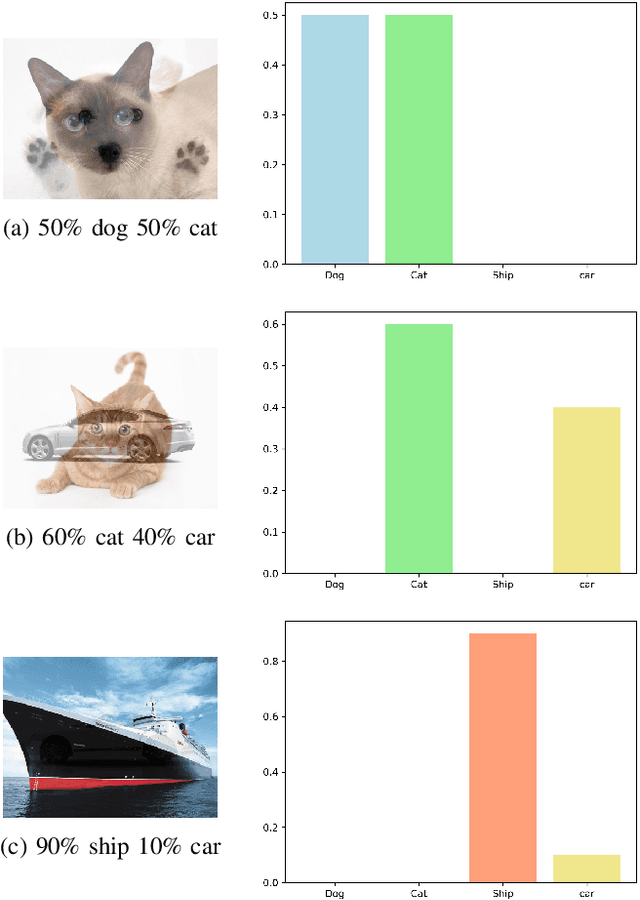

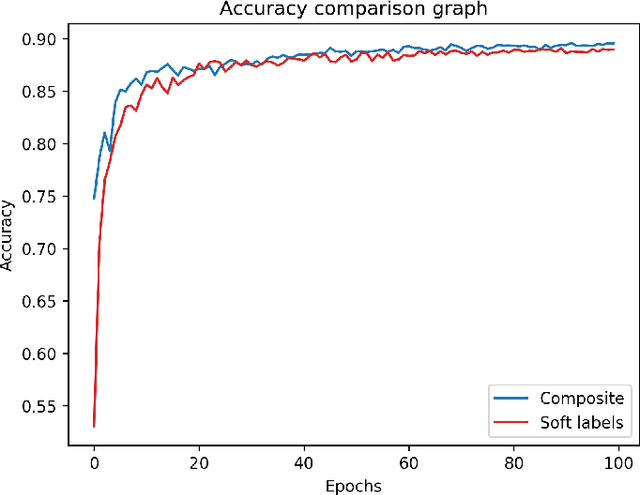

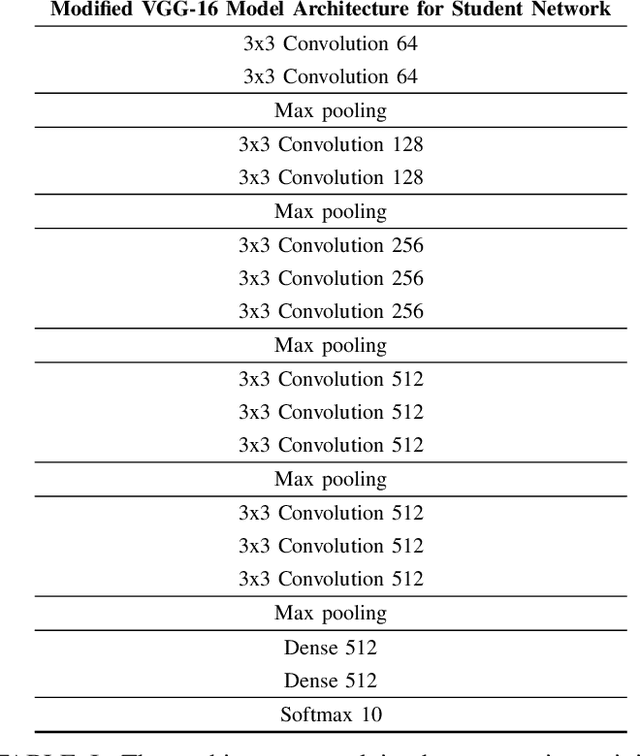

As state-of-the-art deep neural networks are deployed at the core of more advanced Al-based products and services, the incentive for copying them (i.e., their intellectual properties) by rival adversaries is expected to increase considerably over time. The best way to extract or steal knowledge from such networks is by querying them using a large dataset of random samples and recording their output, followed by training a student network to mimic these outputs, without making any assumption about the original networks. The most effective way to protect against such a mimicking attack is to provide only the classification result, without confidence values associated with the softmax layer.In this paper, we present a novel method for generating composite images for attacking a mentor neural network using a student model. Our method assumes no information regarding the mentor's training dataset, architecture, or weights. Further assuming no information regarding the mentor's softmax output values, our method successfully mimics the given neural network and steals all of its knowledge. We also demonstrate that our student network (which copies the mentor) is impervious to watermarking protection methods, and thus would not be detected as a stolen model.Our results imply, essentially, that all current neural networks are vulnerable to mimicking attacks, even if they do not divulge anything but the most basic required output, and that the student model which mimics them cannot be easily detected and singled out as a stolen copy using currently available techniques.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge