Speedup deep learning models on GPU by taking advantage of efficient unstructured pruning and bit-width reduction

Dec 28, 2021

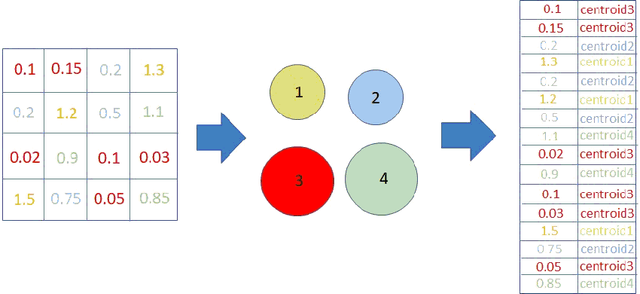

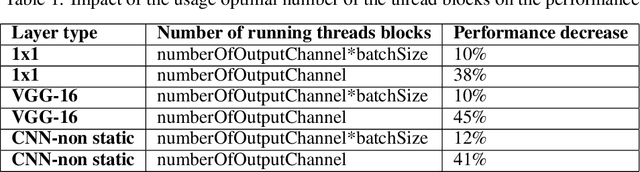

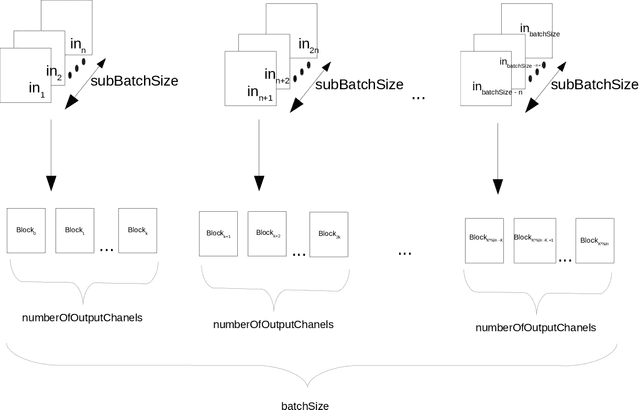

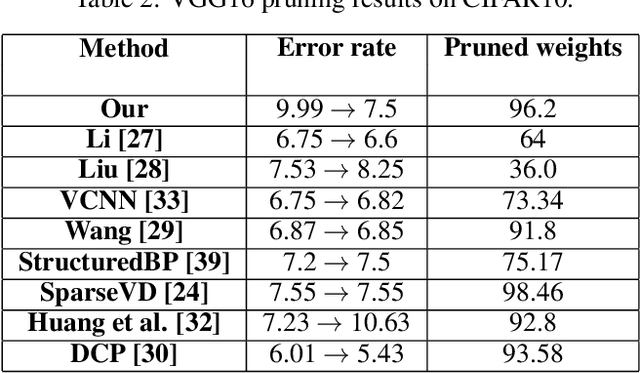

This work focuses on pruning convolutional neural networks (CNNs) and improving their efficiency on graphics processing units (GPUs) by using a direct sparse algorithm. GPUs are the most commonly used accelerators for deep learning (DL) computations, and the NVIDIA deep neural network library (cuDNN) is the most effective implementation of DL algorithms for GPUs. Two of the most common techniques for improving the efficiency of CNN models are weight pruning and quantization. There are two main types of pruning: structural and non-structural. Structural pruning is much easier to accelerate on many types of accelerators, but it is difficult to reach a sparsity level and accuracy as high as those obtained with non-structural pruning. Non-structural pruning with retraining can produce weight tensors with a sparsity of 90% or more in some deep CNN models. This article presents a pruning algorithm that makes it possible to achieve high sparsity levels without a drop in accuracy. In the next stage, linear and non-linear quantization are adapted to further reduce execution time and memory footprint. This paper extends a previously published paper on effective pruning techniques and presents real models, pruned to high sparsity and reduced in precision, that can achieve better performance than the cuDNN library.
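To make the two ideas in the abstract concrete, here is a minimal sketch of non-structural (magnitude-based) pruning followed by linear quantization, written in plain Python. It is an illustration under stated assumptions, not the authors' algorithm: the magnitude criterion, the symmetric quantization scheme, and the function names `magnitude_prune`, `linear_quantize`, `dequantize`, and `sparsity_of` are all hypothetical choices made for this example, and the retraining step mentioned in the abstract is omitted.

```python
def magnitude_prune(weights, sparsity):
    """Zero out the fraction `sparsity` of weights with the smallest magnitude.

    Assumption: a simple global magnitude criterion; the paper's own
    pruning criterion and retraining schedule are not reproduced here.
    """
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be in [0, 1]")
    n_prune = int(len(weights) * sparsity)
    # Indices ordered by absolute value, smallest first.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = list(weights)
    for i in order[:n_prune]:
        pruned[i] = 0.0
    return pruned


def linear_quantize(weights, bits):
    """Symmetric uniform quantization to signed integer codes of `bits` bits.

    Returns (codes, scale) such that weight is approximately code * scale.
    """
    qmax = 2 ** (bits - 1) - 1
    max_abs = max((abs(w) for w in weights), default=0.0)
    if max_abs == 0.0:
        return [0] * len(weights), 1.0
    scale = max_abs / qmax
    # Round to the nearest level and clamp to the representable range.
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Map integer codes back to approximate floating-point weights."""
    return [c * scale for c in codes]


def sparsity_of(weights):
    """Fraction of entries that are exactly zero."""
    return sum(1 for w in weights if w == 0) / len(weights)


# Toy weight vector standing in for a flattened convolution kernel.
weights = [0.9, -0.05, 0.4, 0.01, -0.7, 0.02, 0.3, -0.03, 0.6, 0.1]
pruned = magnitude_prune(weights, sparsity=0.5)
codes, scale = linear_quantize(pruned, bits=8)
restored = dequantize(codes, scale)
```

Because the quantization is symmetric around zero, pruned weights map to the integer code 0, so the sparsity pattern produced by pruning survives the bit-width reduction. That property is what allows a sparse kernel to skip the same entries before and after quantization.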
