Sparks: Inspiration for Science Writing using Language Models

Oct 14, 2021

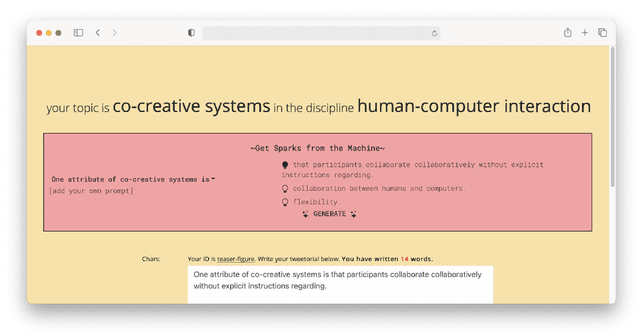

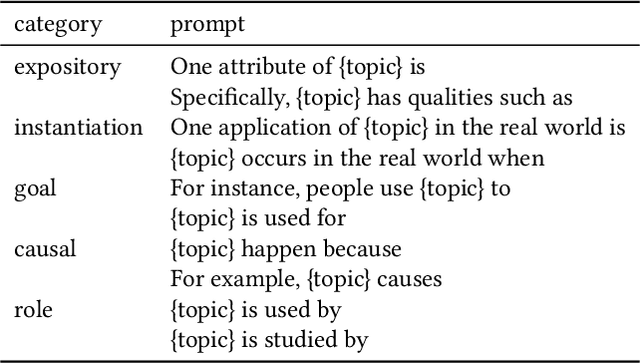

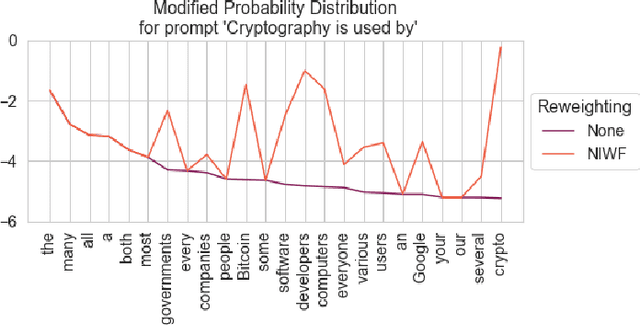

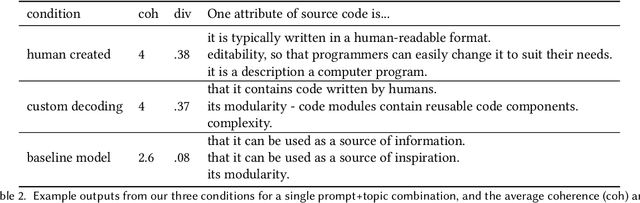

Large-scale language models are rapidly improving, performing well on a wide variety of tasks with little to no customization. In this work we investigate how language models can support science writing, a challenging writing task that is both open-ended and highly constrained. We present a system for generating "sparks", sentences related to a scientific concept intended to inspire writers. We find that our sparks are more coherent and diverse than a competitive language model baseline, and approach a human-created gold standard. In a study with 13 PhD students writing on topics of their own selection, we find three main use cases of sparks: aiding with crafting detailed sentences, providing interesting angles to engage readers, and demonstrating common reader perspectives. We also report on the various reasons sparks were considered unhelpful, and discuss how we might improve language models as writing support tools.
