SPA: Stochastic Probability Adjustment for System Balance of Unsupervised SNNs


Oct 19, 2020

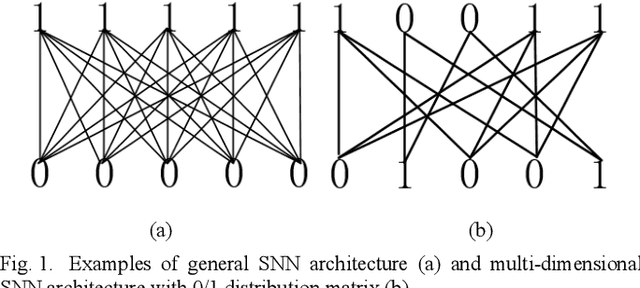

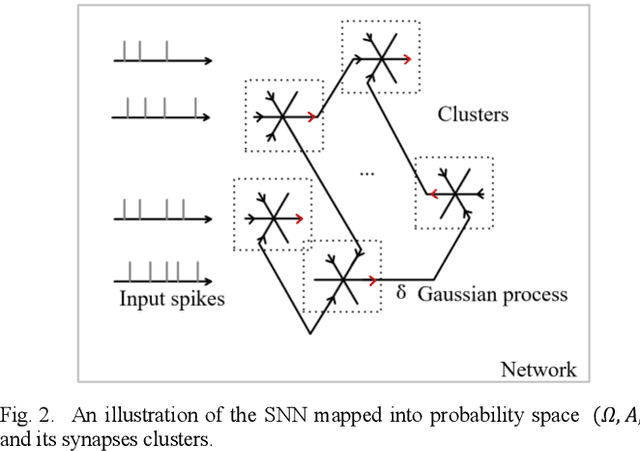

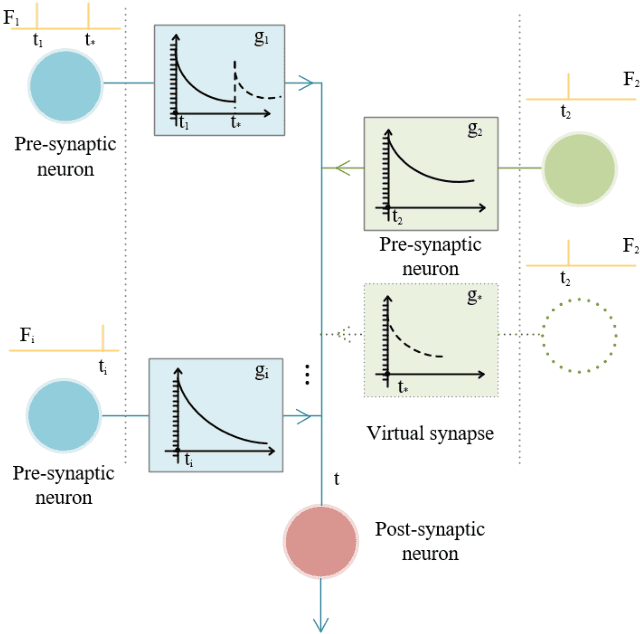

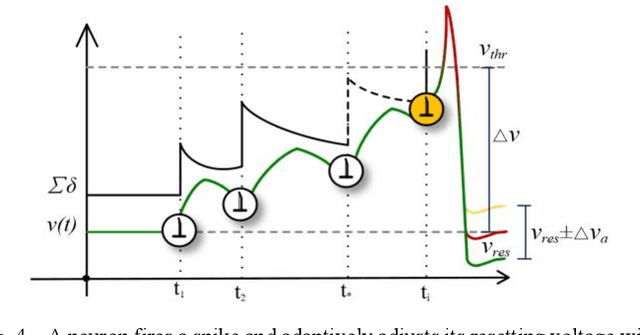

Spiking neural networks (SNNs) have attracted widespread attention for their low-power hardware characteristics and brain-like signal response mechanism, but their performance still lags behind that of artificial neural networks (ANNs). To reduce this gap, we build an information-theory-inspired system called the Stochastic Probability Adjustment (SPA) system. SPA maps the synapses and neurons of an SNN into a probability space in which each neuron and all of its connected pre-synapses are represented by a cluster. The movement of synaptic transmitter between clusters is modeled as a Brownian-like stochastic process in which the transmitter distribution adapts across firing phases. We experimented with a wide range of existing unsupervised SNN architectures and achieved consistent performance improvements, with classification accuracy gains reaching 1.99% and 6.29% on the MNIST and EMNIST datasets, respectively.
