Solving Equations of Random Convex Functions via Anchored Regression


Aug 13, 2018

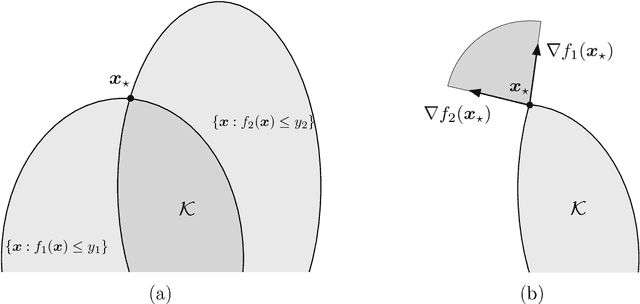

We consider the problem of estimating a solution to a system of equations that involve convex nonlinearities, a problem common in machine learning and signal processing. Because of these nonlinearities, conventional estimators based on empirical risk minimization generally require solving a non-convex optimization program. We propose anchored regression, a new approach based on convex programming that amounts to maximizing a linear functional (perhaps augmented by a regularizer) over a convex set. The proposed convex program is formulated in the natural space of the problem and avoids introducing auxiliary variables, which makes it computationally favorable. Working in the native space also provides great flexibility, since structural priors (e.g., sparsity) can be seamlessly incorporated. For our analysis, we model the equations as being drawn from a fixed set according to a probability law. Our main results provide guarantees on the accuracy of the estimator in terms of the number of equations we are solving, the amount of noise present, a measure of statistical complexity of the random equations, and the geometry of the regularizer at the true solution. We also provide recipes for constructing the anchor vector (which determines the linear functional to maximize) directly from the observed data.
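
As a rough sketch of the kind of program described above, using notation that is assumed here rather than taken from the abstract: suppose the observations are $y_i = f_i(x_\star)$ for $i = 1, \dots, M$, with each $f_i$ convex, $a_0$ the anchor vector, and $\Omega$ an optional convex regularizer. In the noiseless case, one program of this form is

$$
\hat{x} \in \operatorname*{argmax}_{x} \; \langle a_0, x \rangle - \Omega(x)
\quad \text{subject to} \quad f_i(x) \le y_i, \quad i = 1, \dots, M.
$$

Each constraint is a sublevel set of a convex function, so the feasible set is convex, and $x_\star$ lies on its boundary because $f_i(x_\star) = y_i$. The objective is concave whenever $\Omega$ is convex, so this is a convex program in the original variable $x$, with no auxiliary variables. With noisy observations the constraints would have to be relaxed in some way; the abstract does not say how, so that step is left out of this sketch.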
