SOCRATES: Towards a Unified Platform for Neural Network Verification


Jul 22, 2020

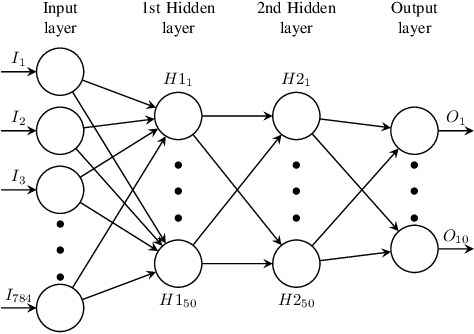

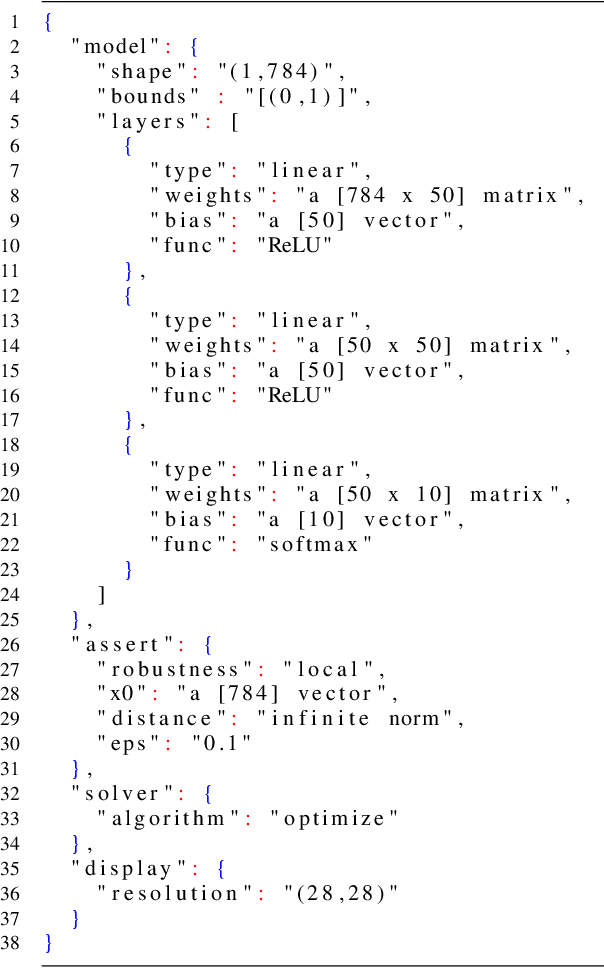

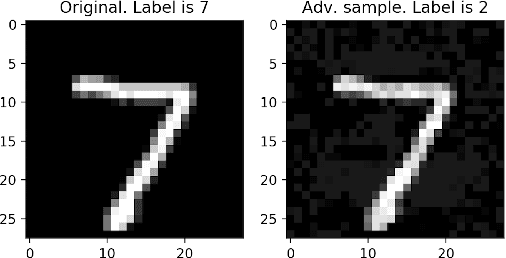

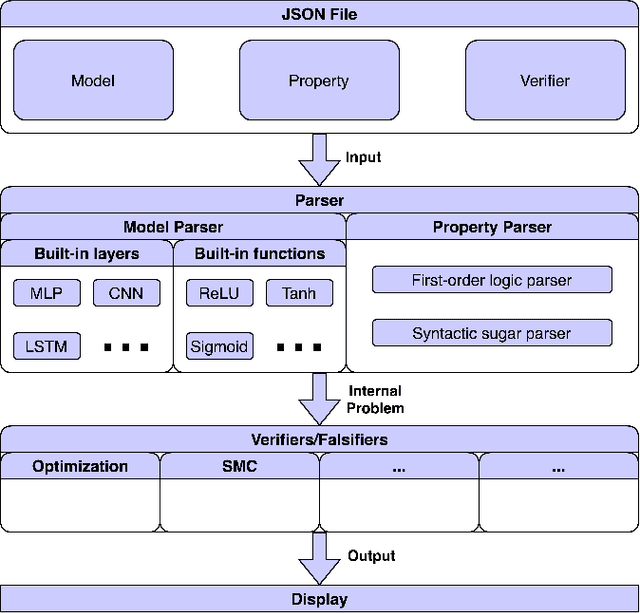

Studies show that neural networks, like traditional programs, are subject to bugs, e.g., adversarial samples that cause classification errors and discriminatory instances that demonstrate a lack of fairness. Given that neural networks are increasingly applied in critical applications (e.g., self-driving cars, face recognition systems, and personal credit rating systems), it is desirable that systematic methods be developed to verify or falsify neural networks against desired properties. Recently, a number of approaches have been developed to verify neural networks. These efforts are, however, scattered (i.e., each approach tackles some restricted classes of neural networks against certain particular properties) and incomparable (i.e., each approach has its own assumptions and input format), and thus hard to apply, reuse, or extend. In this project, we aim to build a unified framework for developing verification techniques for neural networks. Towards this goal, we develop a platform called SOCRATES, which supports a standardized format for a variety of neural network models, an assertion language for property specification, and two novel algorithms for verifying or falsifying neural network models. SOCRATES is extensible, so existing approaches can be easily integrated. Experimental results show that our platform offers performance better than or comparable to that of state-of-the-art approaches. More importantly, it provides a platform for synergistic research on neural network verification.
