Smoothed functional-based gradient algorithms for off-policy reinforcement learning

Paper and Code

Jan 06, 2021

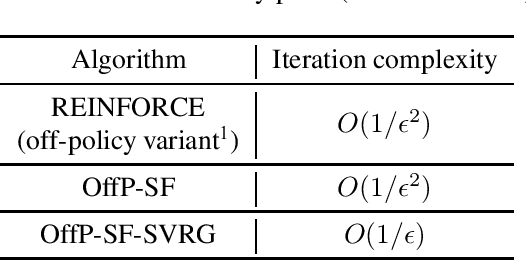

We consider the problem of control in an off-policy reinforcement learning (RL) context. We propose a policy gradient scheme that incorporates a smoothed functional-based gradient estimation scheme. We provide an asymptotic convergence guarantee for the proposed algorithm using the ordinary differential equation (ODE) approach. Further, we derive a non-asymptotic bound that quantifies the rate of convergence of the proposed algorithm.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge