SLAW: Scaled Loss Approximate Weighting for Efficient Multi-Task Learning

Paper and Code

Sep 16, 2021

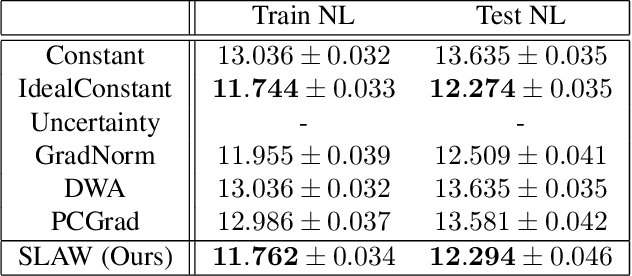

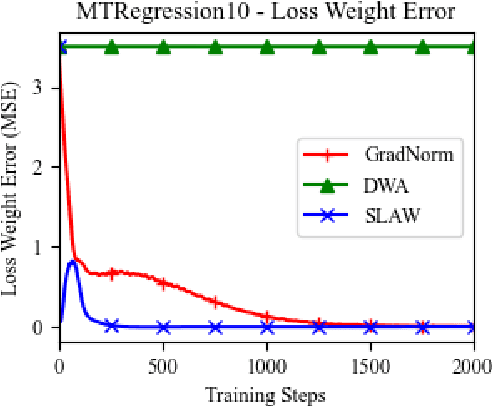

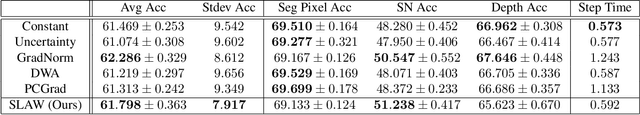

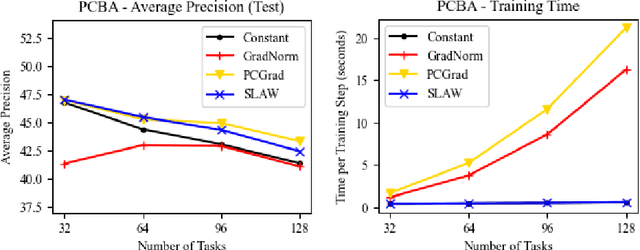

Multi-task learning (MTL) is a subfield of machine learning with important applications, but the multi-objective nature of optimization in MTL leads to difficulties in balancing training between tasks. The best MTL optimization methods require individually computing the gradient of each task's loss function, which impedes scalability to a large number of tasks. In this paper, we propose Scaled Loss Approximate Weighting (SLAW), a method for multi-task optimization that matches the performance of the best existing methods while being much more efficient. SLAW balances learning between tasks by estimating the magnitudes of each task's gradient without performing any extra backward passes. We provide theoretical and empirical justification for SLAW's estimation of gradient magnitudes. Experimental results on non-linear regression, multi-task computer vision, and virtual screening for drug discovery demonstrate that SLAW is significantly more efficient than strong baselines without sacrificing performance and applicable to a diverse range of domains.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge