Simulator-based explanation and debugging of hazard-triggering events in DNN-based safety-critical systems


Apr 01, 2022

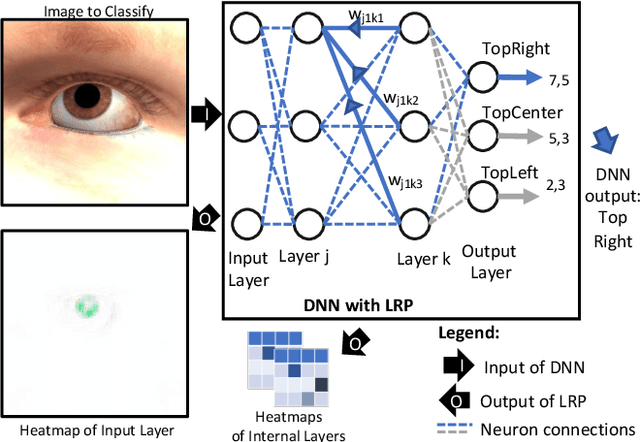

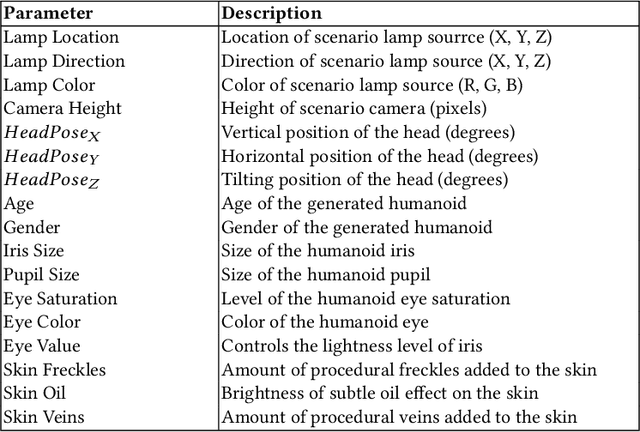

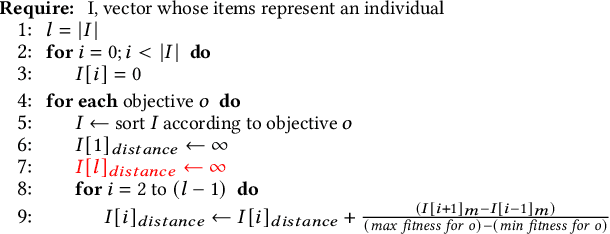

When Deep Neural Networks (DNNs) are used in safety-critical systems, engineers should determine the safety risks associated with DNN errors observed during testing. For DNNs processing images, engineers visually inspect all error-inducing images to determine their common characteristics. Such characteristics correspond to hazard-triggering events (e.g., low illumination) that are essential inputs for safety analysis. Though informative, such manual inspection is expensive and error-prone. To support such safety analysis practices, we propose SEDE, a technique that generates readable descriptions of commonalities in error-inducing, real-world images and improves the DNN through effective retraining. SEDE leverages the availability of simulators, which are commonly used for cyber-physical systems. It relies on genetic algorithms to drive simulators towards the generation of images that are similar to error-inducing, real-world images in the test set; it then leverages rule learning algorithms to derive expressions that capture commonalities in terms of simulator parameter values. The derived expressions are then used to generate additional images to retrain and improve the DNN. With DNNs performing in-car sensing tasks, SEDE successfully characterized hazard-triggering events leading to a DNN accuracy drop. SEDE also enabled retraining to achieve significant improvements in DNN accuracy, of up to 18 percentage points.
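The two-stage idea above (search simulator parameters that reproduce error-inducing images, then learn a rule over those parameters) can be illustrated with a toy sketch. Everything here is an assumption for illustration only: `simulate` is a hypothetical stand-in for a real simulator, the parameters `illumination` and `head_yaw`, the 2-D feature vectors and the target data are made up, the search is a plain truncation-selection genetic algorithm, and the rule learner is a single-threshold decision stump. None of these are necessarily the algorithms, similarity measure or parameters SEDE actually uses.

```python
import random

# Hypothetical simulator parameters and their ranges (illustrative assumption).
PARAM_RANGES = {"illumination": (0.0, 1.0), "head_yaw": (-60.0, 60.0)}


def simulate(params):
    """Toy stand-in for a simulator: maps parameters to a 2-D 'image' feature vector."""
    return (params["illumination"], params["head_yaw"] / 60.0)


def distance(a, b):
    """Euclidean distance between two feature vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) ** 0.5


def random_individual(rng):
    return {k: rng.uniform(lo, hi) for k, (lo, hi) in PARAM_RANGES.items()}


def mutate(ind, rng, rate=0.3):
    """Gaussian mutation, clamped to each parameter's range."""
    child = dict(ind)
    for k, (lo, hi) in PARAM_RANGES.items():
        if rng.random() < rate:
            step = (hi - lo) * 0.1 * rng.gauss(0, 1)
            child[k] = min(hi, max(lo, child[k] + step))
    return child


def crossover(a, b, rng):
    """Uniform crossover: each parameter is taken from one parent at random."""
    return {k: (a[k] if rng.random() < 0.5 else b[k]) for k in PARAM_RANGES}


def evolve_towards(target, rng, pop_size=30, generations=40):
    """Plain GA: search parameters whose simulated output resembles `target`."""
    pop = [random_individual(rng) for _ in range(pop_size)]
    for _ in range(generations):
        pop.sort(key=lambda p: distance(simulate(p), target))
        parents = pop[: pop_size // 2]  # truncation selection; parents survive (elitism)
        children = [
            mutate(crossover(rng.choice(parents), rng.choice(parents), rng), rng)
            for _ in range(pop_size - len(parents))
        ]
        pop = parents + children
    return min(pop, key=lambda p: distance(simulate(p), target))


def learn_threshold_rule(samples, labels):
    """Decision stump: best single 'param <= t' or 'param > t' rule separating
    error-inducing (True) from correctly handled (False) parameter vectors.
    Returns (training accuracy, parameter, operator, threshold)."""
    best = None
    for k in PARAM_RANGES:
        for t in sorted(s[k] for s in samples):
            for op in ("<=", ">"):
                pred = [(s[k] <= t) if op == "<=" else (s[k] > t) for s in samples]
                acc = sum(p == y for p, y in zip(pred, labels)) / len(labels)
                if best is None or acc > best[0]:
                    best = (acc, k, op, t)
    return best


rng = random.Random(0)
# Made-up feature vectors of real-world test images (illustrative data only).
error_targets = [(0.10, 0.2), (0.15, -0.5), (0.05, 0.8)]  # dim scenes the DNN got wrong
ok_targets = [(0.80, 0.1), (0.90, -0.3), (0.70, 0.6)]     # well-lit scenes handled correctly

samples, labels = [], []
for targets, is_error in ((error_targets, True), (ok_targets, False)):
    for target in targets:
        samples.append(evolve_towards(target, rng))
        labels.append(is_error)  # simplifying assumption: label by the target's outcome

rule = learn_threshold_rule(samples, labels)
acc, param, op, thr = rule
print(f"error-inducing if {param} {op} {thr:.2f} (training accuracy {acc:.2f})")
```

In this toy setup the learned expression is a readable condition on a simulator parameter (a threshold on `illumination`), which mirrors the abstract's "low illumination" example; such a condition could then, in principle, be used to sample new parameter values and render extra training images.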
