Side-Channel Analysis of OpenVINO-based Neural Network Models

Paper and Code

Jul 23, 2024

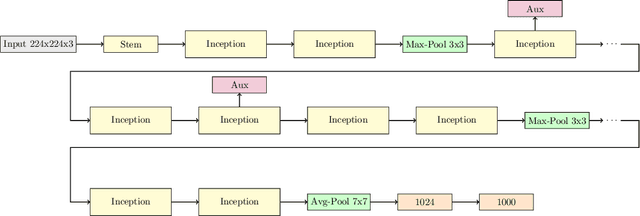

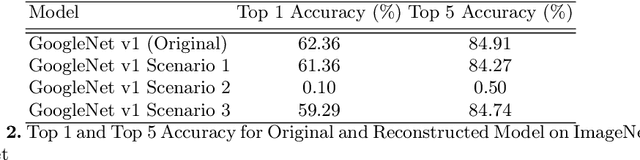

Embedded devices with neural network accelerators offer great versatility for their users, reducing the need to use cloud-based services. At the same time, they introduce new security challenges in the area of hardware attacks, the most prominent being side-channel analysis (SCA). It was shown that SCA can recover model parameters with a high accuracy, posing a threat to entities that wish to keep their models confidential. In this paper, we explore the susceptibility of quantized models implemented in OpenVINO, an embedded framework for deploying neural networks on embedded and Edge devices. We show that it is possible to recover model parameters with high precision, allowing the recovered model to perform very close to the original one. Our experiments on GoogleNet v1 show only a 1% difference in the Top 1 and a 0.64% difference in the Top 5 accuracies.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge