Should XAI Nudge Human Decisions with Explanation Biasing?

Paper and Code

Jun 11, 2024

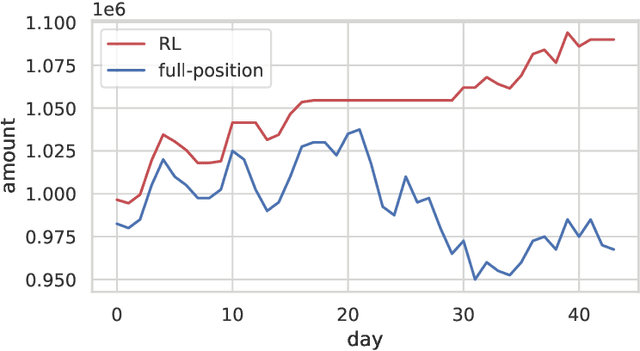

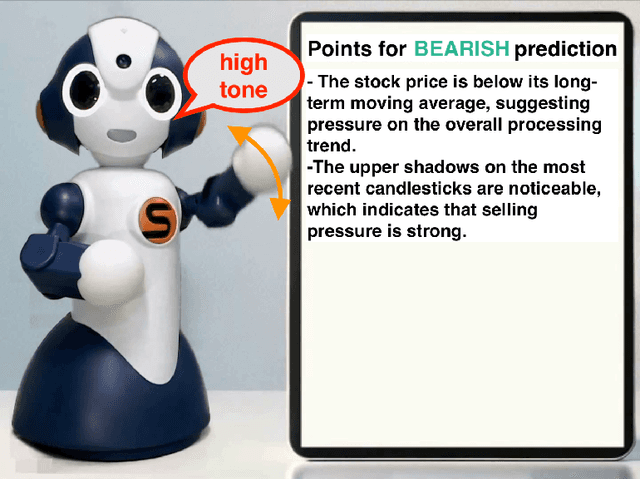

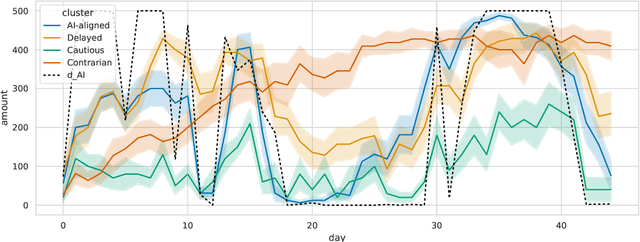

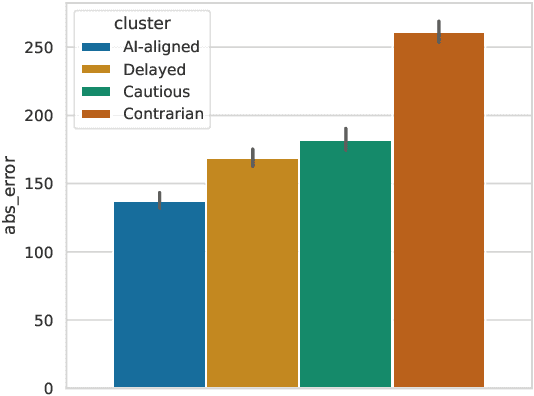

This paper reviews our previous trials of Nudge-XAI, an approach that automatically introduces biases into explanations from explainable AIs (XAIs) with the aim of leading users to better decisions, and discusses its benefits and challenges. Nudge-XAI uses a user model that predicts the influence of providing or emphasizing an explanation, and it attempts to guide users toward AI-suggested decisions without coercion. The nudge design is expected to enhance the autonomy of users, reduce the risk associated with an AI making decisions without users' full agreement, and enable users to avoid AI failures. To discuss the potential of Nudge-XAI, this paper reports a post-hoc investigation of previous experimental results using cluster analysis. The results demonstrate the diversity of user behavior in response to Nudge-XAI, which supports our aim of enhancing user autonomy. However, they also highlight the challenge of users who distrust AI and mistakenly make decisions contrary to AI suggestions, suggesting the need for personalized adjustment of the strength of nudges to make this approach work more generally.
