Sheaf theory: from deep geometry to deep learning

Paper and Code

Feb 21, 2025

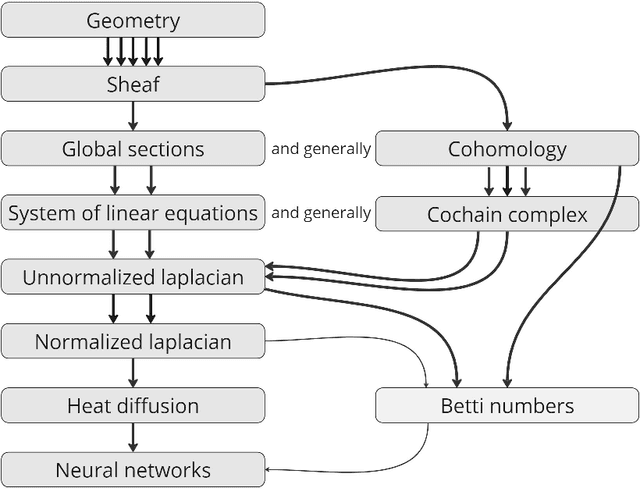

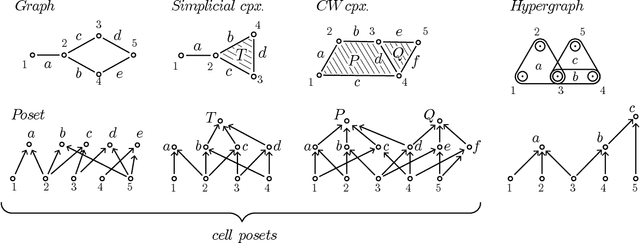

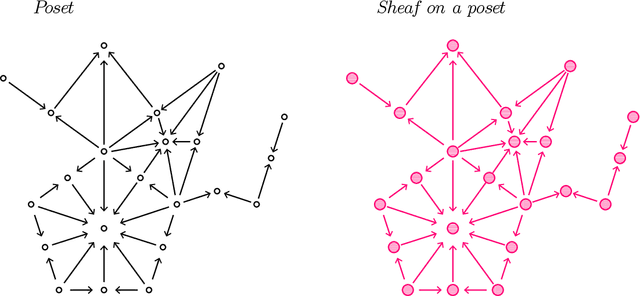

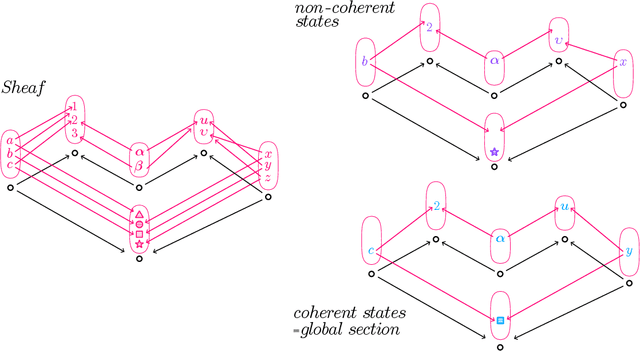

This paper provides an overview of the applications of sheaf theory in deep learning, data science, and computer science in general. The primary text of this work serves as a friendly introduction to applied and computational sheaf theory accessible to those with modest mathematical familiarity. We describe intuitions and motivations underlying sheaf theory shared by both theoretical researchers and practitioners, bridging classical mathematical theory and its more recent implementations within signal processing and deep learning. We observe that most notions commonly considered specific to cellular sheaves translate to sheaves on arbitrary posets, providing an interesting avenue for further generalization of these methods in applications, and we present a new algorithm to compute sheaf cohomology on arbitrary finite posets in response. By integrating classical theory with recent applications, this work reveals certain blind spots in current machine learning practices. We conclude with a list of problems related to sheaf-theoretic applications that we find mathematically insightful and practically instructive to solve. To ensure the exposition of sheaf theory is self-contained, a rigorous mathematical introduction is provided in appendices which moves from an introduction of diagrams and sheaves to the definition of derived functors, higher order cohomology, sheaf Laplacians, sheaf diffusion, and interconnections of these subjects therein.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge