Sharp Inequalities for $f$-divergences


Oct 15, 2013

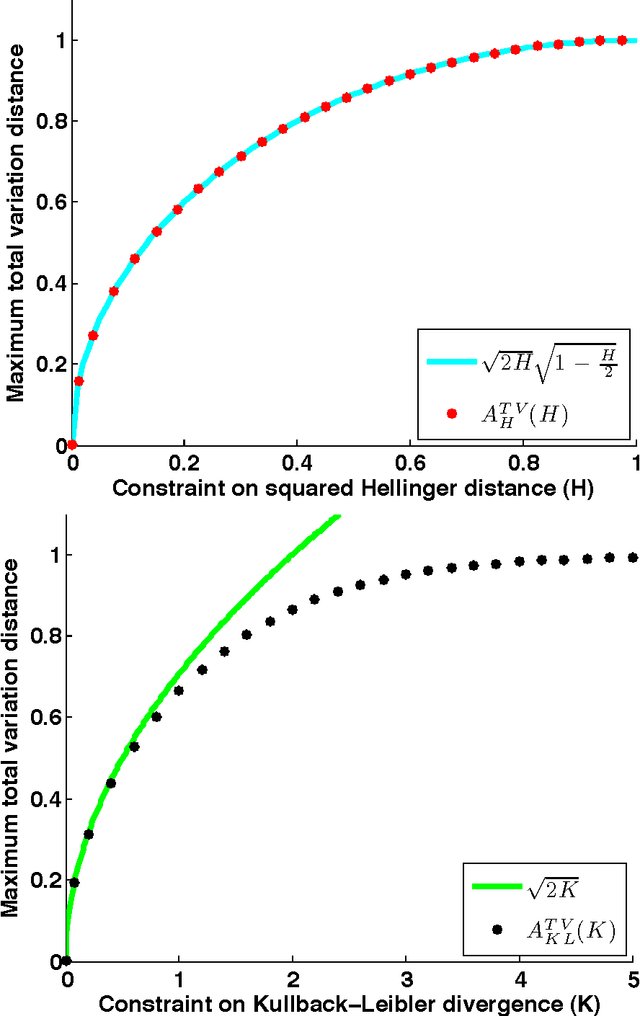

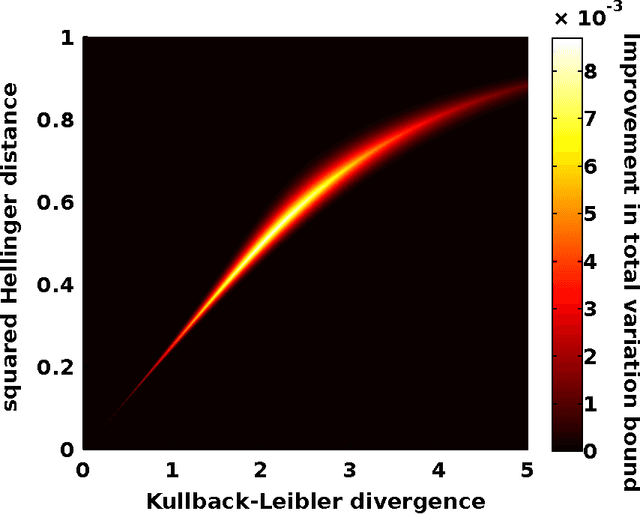

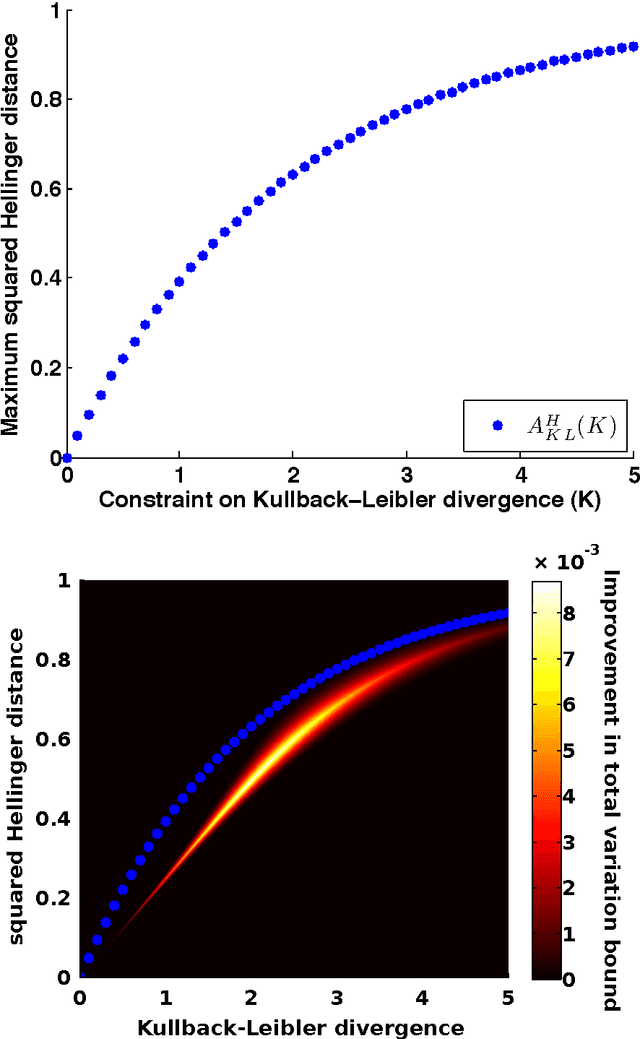

$f$-divergences are a general class of divergences between probability measures that includes, as special cases, many commonly used divergences in probability, mathematical statistics and information theory, such as the Kullback-Leibler divergence, chi-squared divergence, squared Hellinger distance and total variation distance. In this paper, we study the problem of maximizing or minimizing an $f$-divergence between two probability measures subject to a finite number of constraints on other $f$-divergences. We show that these infinite-dimensional optimization problems can all be reduced to tractable optimization problems over small finite-dimensional spaces. Our results lead to a comprehensive and unified treatment of the problem of obtaining sharp inequalities between $f$-divergences. We demonstrate that many existing results on inequalities between $f$-divergences can be obtained as special cases of our results, and we also improve on some existing non-sharp inequalities.
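As a concrete illustration of the objects discussed above, the following minimal sketch computes several $f$-divergences for discrete distributions using the standard definition $D_f(P\|Q) = \sum_i q_i f(p_i/q_i)$ with $f$ convex and $f(1) = 0$. It is not taken from the paper: the function names, the example distributions `p` and `q`, and the normalization conventions (total variation with the factor $1/2$, squared Hellinger distance without it) are assumptions made for illustration. The final checks use two well-known inequalities between divergences, Pinsker's inequality and the bound of Kullback-Leibler divergence by $\log(1 + \chi^2)$, as examples of the kind of relation the abstract refers to.

```python
import math

def f_divergence(p, q, f):
    """D_f(P||Q) = sum_i q_i * f(p_i / q_i); assumes every q_i > 0."""
    return sum(qi * f(pi / qi) for pi, qi in zip(p, q))

# Generator functions f (each convex with f(1) = 0).
# Normalization conventions here are assumptions; other texts differ by constants.
def kl_f(t):
    return t * math.log(t) if t > 0 else 0.0  # convention: 0 * log 0 = 0

def chi2_f(t):
    return (t - 1.0) ** 2

def hellinger_sq_f(t):
    return (math.sqrt(t) - 1.0) ** 2

def tv_f(t):
    return 0.5 * abs(t - 1.0)

# Hypothetical example distributions on three points.
p = [0.5, 0.3, 0.2]
q = [0.4, 0.4, 0.2]

kl = f_divergence(p, q, kl_f)
chi2 = f_divergence(p, q, chi2_f)
hell_sq = f_divergence(p, q, hellinger_sq_f)
tv = f_divergence(p, q, tv_f)

# Two classical inequalities between these divergences:
pinsker_holds = kl >= 2.0 * tv ** 2           # Pinsker: KL >= 2 * TV^2
chi2_bound_holds = kl <= math.log1p(chi2)     # KL <= log(1 + chi^2)

print(f"KL={kl:.5f} chi2={chi2:.5f} H^2={hell_sq:.5f} TV={tv:.5f}")
print("Pinsker holds:", pinsker_holds, "| KL <= log(1+chi2):", chi2_bound_holds)
```

Swapping in a different convex `f` with `f(1) = 0` yields another member of the family, so a numerical check of a conjectured inequality only requires changing the generator functions.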
