Sequence to Logic with Copy and Cache

Jul 19, 2018

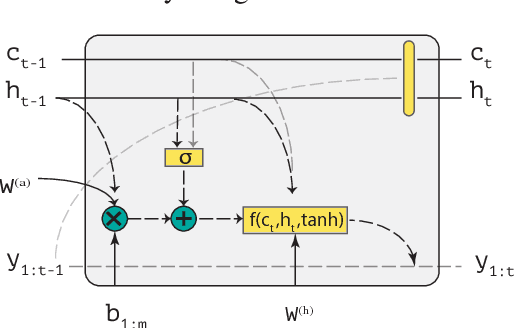

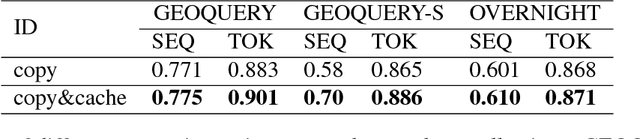

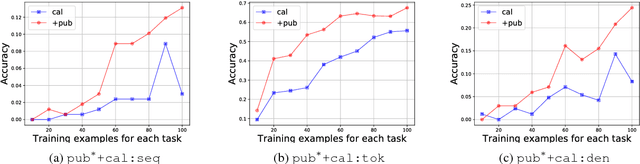

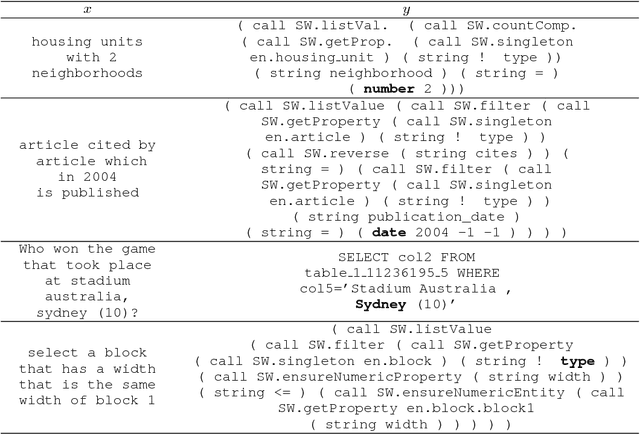

Generating logical-form equivalents of natural language is a recent application of neural architectures, in which long short-term memory (LSTM) units capture dependencies in both the encoder and the decoder. The logical form of a sequence usually preserves information from the natural language side in the form of similar tokens, and a recently proposed copying mechanism increases the probability of outputting tokens from the source input during decoding. In this paper we propose a caching mechanism as a more general form of the copying mechanism, one that also weighs every word in the source vocabulary according to its relation to the current decoding context. Our results confirm that the proposed method improves sequence-level and token-level accuracy on sequence-to-logical-form tasks. Further experiments on cross-domain adversarial attacks show substantial improvements when the most influential examples from other domains are used for training.
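To make the contrast between copying and caching concrete, here is a minimal sketch in plain Python. It is an illustration under stated assumptions, not the paper's formulation: the abstract does not give the scoring function or the mixing rule, so the dot-product scoring, the fixed scalar `gate`, and all names (`copy_distribution`, `cache_distribution`, `cache_keys`, the toy vectors) are hypothetical.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def copy_distribution(source_tokens, attention):
    """Copying: attention mass lands only on tokens present in the current input."""
    probs = {}
    for tok, a in zip(source_tokens, attention):
        probs[tok] = probs.get(tok, 0.0) + a
    return probs

def cache_distribution(decoder_state, cache_keys):
    """Caching (assumed form): score every source-vocabulary word against the
    current decoding context, whether or not it appears in the input."""
    words = list(cache_keys)
    weights = softmax([dot(decoder_state, cache_keys[w]) for w in words])
    return dict(zip(words, weights))

def mix(p_gen, p_aux, gate):
    """Interpolate the generator distribution with an auxiliary one.
    A fixed scalar gate is an assumption; a model would typically learn it."""
    words = set(p_gen) | set(p_aux)
    return {w: (1 - gate) * p_gen.get(w, 0.0) + gate * p_aux.get(w, 0.0)
            for w in words}

# Toy setup: all values below are made up for illustration.
p_gen = {w: 0.2 for w in ["answer", "(", ")", "river", "texas"]}
source_tokens = ["which", "river", "in", "texas"]
attention = [0.1, 0.5, 0.1, 0.3]
cache_keys = {                      # one hypothetical key vector per source word
    "river": [1.0, 0.0],
    "lake": [0.9, 0.1],
    "texas": [0.0, 1.0],
    "which": [0.0, 0.0],
}
decoder_state = [2.0, 0.0]          # hypothetical current decoding context

copy_p = copy_distribution(source_tokens, attention)
cache_p = cache_distribution(decoder_state, cache_keys)
with_copy = mix(p_gen, copy_p, gate=0.5)
with_cache = mix(p_gen, cache_p, gate=0.5)
```

In this toy setup, the copy distribution assigns no mass to `lake` because it is absent from the input, while the cache distribution gives it weight because its key is close to the decoding context. That is the sense in which caching generalizes copying in the abstract's description.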
