Semi-Supervised Learning with the Deep Rendering Mixture Model


Dec 06, 2016

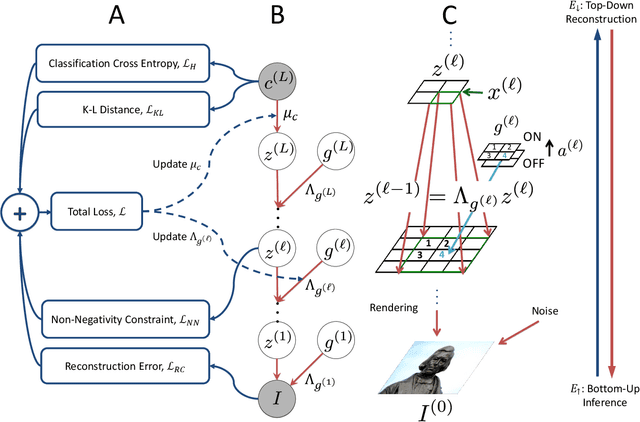

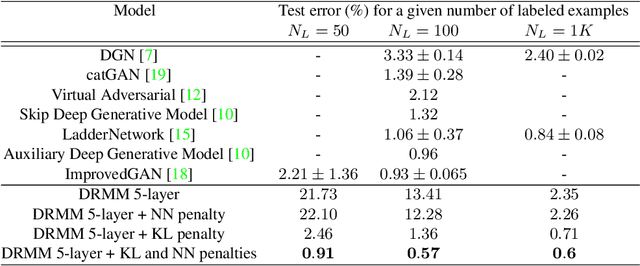

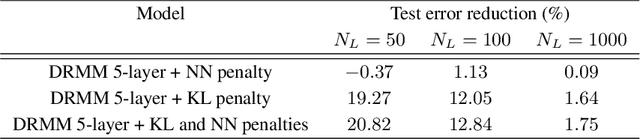

Semi-supervised learning algorithms reduce the high cost of acquiring labeled training data by using both labeled and unlabeled data during learning. Deep Convolutional Networks (DCNs) have achieved great success in supervised tasks and, as such, have been widely employed in semi-supervised learning. In this paper we leverage the recently developed Deep Rendering Mixture Model (DRMM), a probabilistic generative model that models latent nuisance variation and whose inference algorithm yields DCNs. We develop an EM algorithm for the DRMM to learn from both labeled and unlabeled data. Guided by the theory of the DRMM, we introduce a novel non-negativity constraint and a variational inference term. We report state-of-the-art performance on MNIST and SVHN and competitive results on CIFAR10. Using synthetically rendered images, we also probe how a DRMM trained in a semi-supervised setting represents latent nuisance variation. Taken together, our work provides a unified framework for supervised, unsupervised, and semi-supervised learning.
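To make the idea of an EM algorithm that learns from both labeled and unlabeled data concrete, here is a minimal sketch on a toy spherical Gaussian mixture. It is not the DRMM and not the authors' algorithm: the functions `semi_supervised_em` and `predict`, the shared-variance assumption, and the initialization from labeled data are all illustrative choices, and the sketch assumes every class has at least one labeled example. It also omits the non-negativity constraint and the variational inference term.

```python
import numpy as np

def semi_supervised_em(x_lab, y_lab, x_unl, n_classes, n_iters=50):
    """Toy semi-supervised EM for a spherical Gaussian mixture (NOT the DRMM)."""
    # Labeled points have known classes, so their responsibilities are one-hot.
    r_lab = np.eye(n_classes)[y_lab]
    # Initialize class means, shared variance, and priors from labeled data only.
    means = np.stack([x_lab[y_lab == k].mean(axis=0) for k in range(n_classes)])
    var = x_lab.var() + 1e-6
    priors = r_lab.mean(axis=0)
    x_all = np.concatenate([x_lab, x_unl], axis=0)
    for _ in range(n_iters):
        # E-step: posterior class probabilities for the unlabeled points.
        sq_dist = ((x_unl[:, None, :] - means[None, :, :]) ** 2).sum(axis=2)
        log_post = np.log(priors)[None, :] - 0.5 * sq_dist / var
        log_post -= log_post.max(axis=1, keepdims=True)  # numerical stability
        r_unl = np.exp(log_post)
        r_unl /= r_unl.sum(axis=1, keepdims=True)
        # M-step: re-estimate parameters from labeled and unlabeled data together.
        r_all = np.concatenate([r_lab, r_unl], axis=0)
        counts = r_all.sum(axis=0)
        means = (r_all.T @ x_all) / counts[:, None]
        sq_all = ((x_all[:, None, :] - means[None, :, :]) ** 2).sum(axis=2)
        var = (r_all * sq_all).sum() / x_all.size + 1e-6
        priors = counts / counts.sum()
    return means, var, priors

def predict(x, means, var, priors):
    """Assign each point to the class with the highest posterior probability."""
    sq_dist = ((x[:, None, :] - means[None, :, :]) ** 2).sum(axis=2)
    return np.argmax(np.log(priors)[None, :] - 0.5 * sq_dist / var, axis=1)
```

The labeled points keep fixed one-hot responsibilities while the unlabeled points receive soft assignments in each E-step, so both kinds of data contribute to the same parameter updates in the M-step.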
