Semantic Hypergraphs


Aug 28, 2019

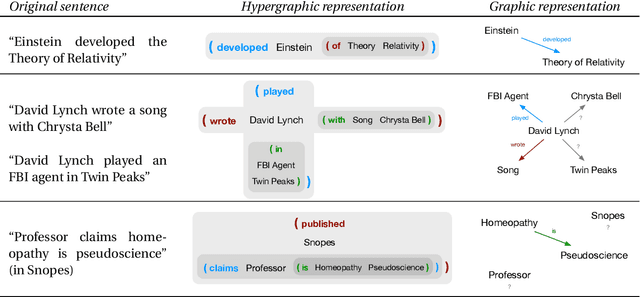

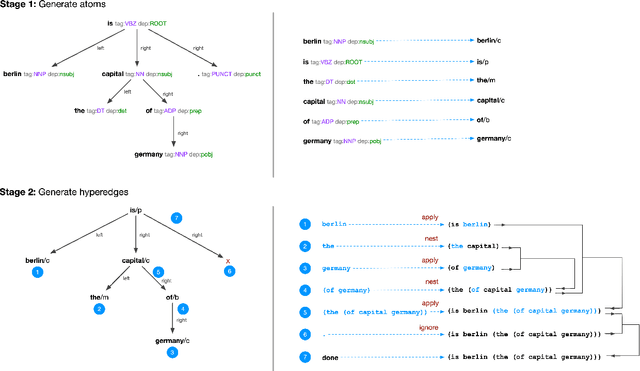

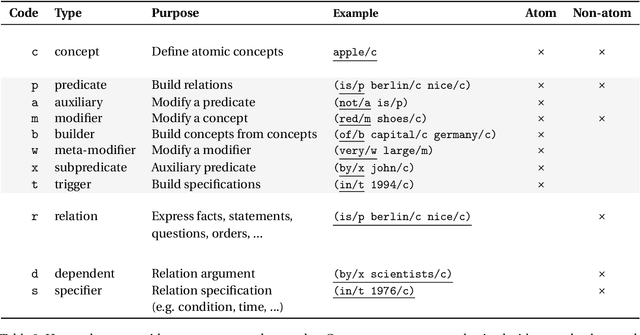

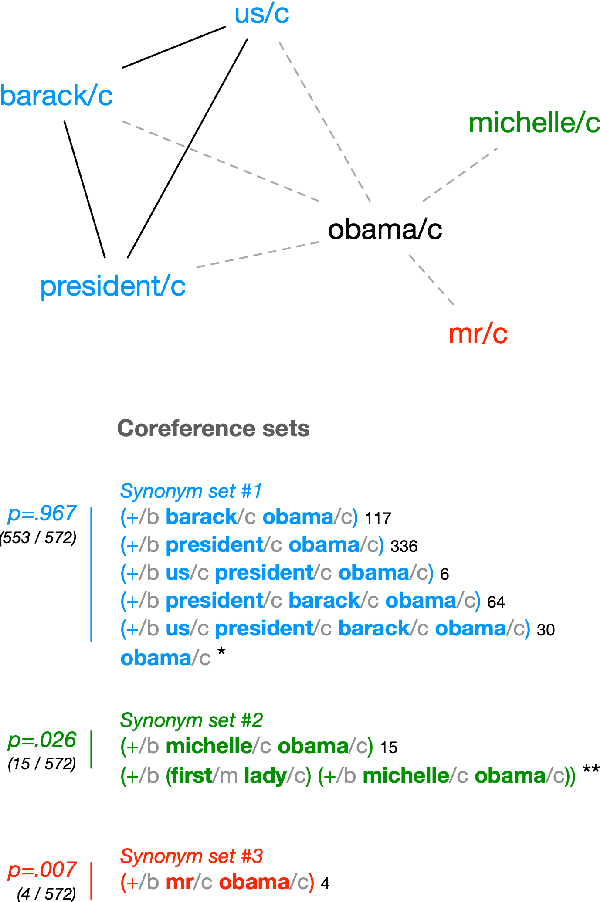

Existing computational methods for the analysis of corpora of text in natural language are still far from approaching a human level of understanding. We attempt to advance the state of the art by introducing a model and algorithmic framework to transform text into recursively structured data. We apply this to the analysis of news titles extracted from a social news aggregation website. We show that a recursive ordered hypergraph is a sufficiently generic structure to represent a significant number of fundamental natural language constructs, with advantages over conventional approaches such as semantic graphs. We present a pipeline of transformations from the output of conventional NLP algorithms to such hypergraphs, which we denote as semantic hypergraphs. The features of these transformations include the creation of new concepts from existing ones, the organisation of statements into regular structures of predicates followed by an arbitrary number of entities, and the ability to represent statements about other statements. We demonstrate knowledge inference from the hypergraph, identifying claims and expressions of conflict, along with their participating actors and topics. We show how this enables the actor-centric summarization of conflicts, comparison of topics of claims between actors, and networks of conflicts between actors in the context of a given topic. On the whole, we propose a hypergraphic knowledge representation model that can be used to provide effective overviews of a large corpus of text in natural language.
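To make the idea of a recursive ordered hypergraph more concrete, here is a minimal Python sketch. It is not the authors' implementation or notation: the nested-tuple encoding, the `+` concept builder, the example sentence, and the helper names `is_atom`, `depth`, and `find_claims` are all assumptions chosen for illustration. It shows a predicate followed by its entities, a concept built from existing ones, a statement about another statement, and a naive claim lookup.

```python
from typing import List, Tuple, Union

# Assumed encoding: an atom is a string; a hyperedge is an ordered tuple
# whose elements are atoms or other hyperedges (hence "recursive").
Edge = Union[str, Tuple["Edge", ...]]


def is_atom(edge: Edge) -> bool:
    """Atoms are the leaves of the structure."""
    return isinstance(edge, str)


def depth(edge: Edge) -> int:
    """Nesting depth: 0 for an atom, 1 + deepest element for a hyperedge."""
    if is_atom(edge):
        return 0
    return 1 + max((depth(e) for e in edge), default=0)


def find_claims(
    edge: Edge,
    claim_predicates: frozenset = frozenset({"says", "claims"}),
) -> List[Tuple[Edge, Edge]]:
    """Collect (actor, claim) pairs from edges shaped (predicate, actor, claim).

    The predicate list is a hypothetical stand-in for real claim detection.
    """
    found: List[Tuple[Edge, Edge]] = []
    if is_atom(edge):
        return found
    if len(edge) == 3 and edge[0] in claim_predicates:
        found.append((edge[1], edge[2]))
    for sub in edge:
        found.extend(find_claims(sub, claim_predicates))
    return found


# A new concept built from existing ones (the "+" builder is an assumption).
north_korea: Edge = ("+", "north", "korea")

# A statement: a predicate followed by its entities, in order.
threat: Edge = ("threatens", north_korea, "japan")

# A statement about another statement.
report: Edge = ("says", "tokyo", threat)

claims = find_claims(report)
```

Because hyperedges are ordered and may contain other hyperedges, the claim in `report` carries the whole inner statement as one of its elements, which is something a plain graph of pairwise links cannot express directly.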
