Self-Calibration of the Offset Between GPS and Semantic Map Frames for Robust Localization

May 25, 2021

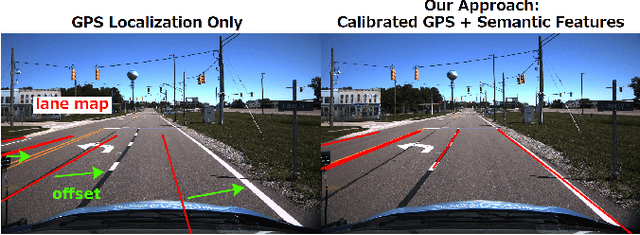

In self-driving, standalone GPS is generally considered to have insufficient positioning accuracy to stay in lane. Instead, many turn to LIDAR localization, but this comes at the expense of building LIDAR maps that can be costly to maintain. Another possibility is to use semantic cues such as lane lines and traffic lights to achieve localization, but these are usually not continuously visible. This issue can be remedied by combining semantic cues with GPS to fill in the gaps. However, due to elapsed time between mapping and localization, the live GPS frame can be offset from the semantic map frame, requiring calibration. In this paper, we propose a robust semantic localization algorithm that self-calibrates for the offset between the live GPS and semantic map frames by exploiting common semantic cues, including traffic lights and lane markings. We formulate the problem using a modified Iterated Extended Kalman Filter, which incorporates GPS and camera images for semantic cue detection via Convolutional Neural Networks. Experimental results show that our proposed algorithm achieves decimetre-level accuracy comparable to typical LIDAR localization performance and is robust against sparse semantic features and frequent GPS dropouts.
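The core idea of self-calibration, estimating the GPS-to-map offset as part of the filter state, can be illustrated with a deliberately simplified sketch. This is not the paper's modified Iterated Extended Kalman Filter: it is a plain linear Kalman filter in one dimension, with simulated measurements standing in for GPS and for CNN-based semantic cue detections. All names, noise values, and the simulation setup (`predict`, `update`, `simulate`, the 1.5 m offset, a cue every 20th step) are assumptions made for illustration only.

```python
import random

def predict(x, P, velocity, dt, q_pos, q_off):
    """Propagate state [map position, GPS-map offset]; offset is a slow random walk."""
    x = [x[0] + velocity * dt, x[1]]
    P = [[P[0][0] + q_pos, P[0][1]],
         [P[1][0], P[1][1] + q_off]]
    return x, P

def update(x, P, z, H, r):
    """Scalar Kalman update with measurement row H and noise variance r."""
    y = z - (H[0] * x[0] + H[1] * x[1])            # innovation
    PHt = [P[0][0] * H[0] + P[0][1] * H[1],
           P[1][0] * H[0] + P[1][1] * H[1]]
    s = H[0] * PHt[0] + H[1] * PHt[1] + r          # innovation variance
    K = [PHt[0] / s, PHt[1] / s]                   # Kalman gain
    x = [x[0] + K[0] * y, x[1] + K[1] * y]
    HP = [H[0] * P[0][0] + H[1] * P[1][0],
          H[0] * P[0][1] + H[1] * P[1][1]]
    P = [[P[0][0] - K[0] * HP[0], P[0][1] - K[0] * HP[1]],   # P = (I - K H) P
         [P[1][0] - K[1] * HP[0], P[1][1] - K[1] * HP[1]]]
    return x, P

def simulate(steps=400, true_offset=1.5, seed=0):
    """Hypothetical 1D drive: GPS at every step, a semantic cue only every 20th step."""
    rng = random.Random(seed)
    x, P = [0.0, 0.0], [[1.0, 0.0], [0.0, 4.0]]    # offset starts unknown
    true_pos, velocity, dt = 0.0, 1.0, 0.1
    for k in range(steps):
        true_pos += velocity * dt
        x, P = predict(x, P, velocity, dt, q_pos=0.01, q_off=1e-6)
        # GPS reports position in the GPS frame: map position plus offset.
        z_gps = true_pos + true_offset + rng.gauss(0.0, 0.3)
        x, P = update(x, P, z_gps, H=[1.0, 1.0], r=0.09)
        # A semantic cue (e.g. a mapped landmark) observes map position directly.
        if k % 20 == 0:
            z_sem = true_pos + rng.gauss(0.0, 0.1)
            x, P = update(x, P, z_sem, H=[1.0, 0.0], r=0.01)
    return x, true_pos, true_offset

if __name__ == "__main__":
    estimate, true_pos, true_offset = simulate()
    print("position error:", abs(estimate[0] - true_pos))
    print("offset error:", abs(estimate[1] - true_offset))
```

In this toy setup the offset only becomes observable when a semantic measurement arrives. Between cues, the calibrated GPS carries the position estimate, which mirrors the gap-filling role the abstract describes.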