SEEK: model extraction attack against hybrid secure inference protocols

Paper and Code

Sep 14, 2022

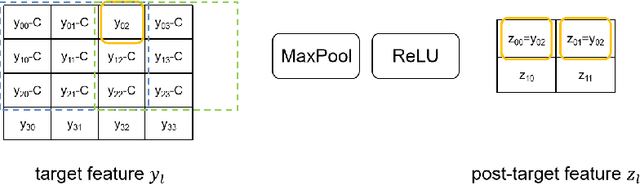

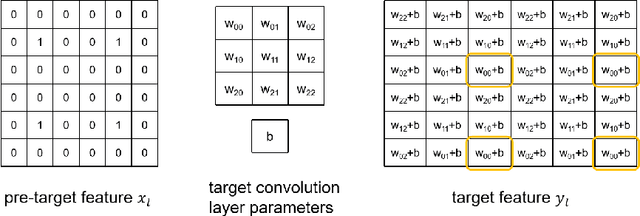

Security concerns about a machine learning model used in a prediction-as-a-service include the privacy of the model, the query and the result. Secure inference solutions based on homomorphic encryption (HE) and/or multiparty computation (MPC) have been developed to protect all the sensitive information. One of the most efficient type of solution utilizes HE for linear layers, and MPC for non-linear layers. However, for such hybrid protocols with semi-honest security, an adversary can malleate the intermediate features in the inference process, and extract model information more effectively than methods against inference service in plaintext. In this paper, we propose SEEK, a general extraction method for hybrid secure inference services outputing only class labels. This method can extract each layer of the target model independently, and is not affected by the depth of the model. For ResNet-18, SEEK can extract a parameter with less than 50 queries on average, with average error less than $0.03\%$.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge