Scalable Bayesian neural networks by layer-wise input augmentation

Oct 26, 2020

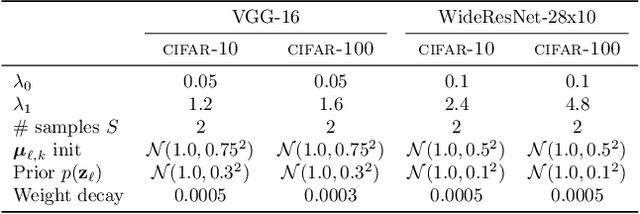

We introduce implicit Bayesian neural networks, a simple and scalable approach to uncertainty representation in deep learning. The standard Bayesian approach to deep learning requires inferring a posterior distribution over millions of parameters, which is impractical. Instead, we propose to induce a distribution that captures the uncertainty over neural networks by augmenting each layer's inputs with latent variables. We present appropriate input distributions and demonstrate state-of-the-art performance in terms of calibration, robustness and uncertainty characterisation on large-scale image classification tasks with multi-million-parameter networks.
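As a rough illustration of layer-wise input augmentation, the sketch below appends freshly sampled latent variables to the input of every layer of a small fully connected classifier, so that repeated forward passes give different predictions. It is a minimal sketch under stated assumptions, not the paper's implementation: the abstract does not specify the input distributions or how the latents enter each layer, so the standard-normal latents, the concatenation, and all names (`dense`, `init_params`, `forward`, `predictive`, `latent_dim`) are hypothetical choices made for illustration. The weights are random and untrained.

```python
import math
import random

def dense(inputs, weights, bias):
    """Affine map; weights holds one row per output unit."""
    return [sum(w * v for w, v in zip(row, inputs)) + b
            for row, b in zip(weights, bias)]

def init_params(sizes, latent_dim, rng):
    """Random weights; every layer accepts latent_dim extra input columns."""
    params = []
    for n_in, n_out in zip(sizes[:-1], sizes[1:]):
        fan_in = n_in + latent_dim
        scale = 1.0 / math.sqrt(fan_in)
        weights = [[rng.gauss(0.0, scale) for _ in range(fan_in)]
                   for _ in range(n_out)]
        params.append((weights, [0.0] * n_out))
    return params

def forward(x, params, latent_dim, rng):
    """One stochastic pass: fresh latents are appended to each layer's input."""
    h = list(x)
    for i, (weights, bias) in enumerate(params):
        # Assumption: latents are drawn from N(0, 1) and concatenated.
        z = [rng.gauss(0.0, 1.0) for _ in range(latent_dim)]
        h = dense(h + z, weights, bias)
        if i < len(params) - 1:
            h = [max(v, 0.0) for v in h]  # ReLU on hidden layers only
    # Numerically stable softmax over the output logits.
    m = max(h)
    exps = [math.exp(v - m) for v in h]
    total = sum(exps)
    return [e / total for e in exps]

def predictive(x, params, latent_dim, rng, n_samples=50):
    """Average class probabilities over several latent draws."""
    draws = [forward(x, params, latent_dim, rng) for _ in range(n_samples)]
    n_classes = len(draws[0])
    mean = [sum(d[k] for d in draws) / n_samples for k in range(n_classes)]
    return mean, draws

rng = random.Random(0)
params = init_params([4, 8, 3], latent_dim=2, rng=rng)
mean, draws = predictive([0.5, -1.0, 0.3, 2.0], params, latent_dim=2, rng=rng)
```

The weights here are point estimates, and the only source of randomness is the latent input, so the spread of `draws` around `mean` acts as the uncertainty signal. Setting `latent_dim=0` recovers an ordinary deterministic network.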

* 8 pages
