Sampling Agnostic Feature Representation for Long-Term Person Re-identification

Paper and Code

Sep 20, 2022

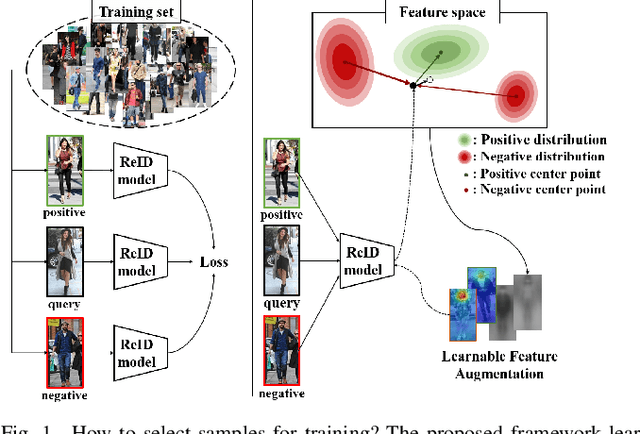

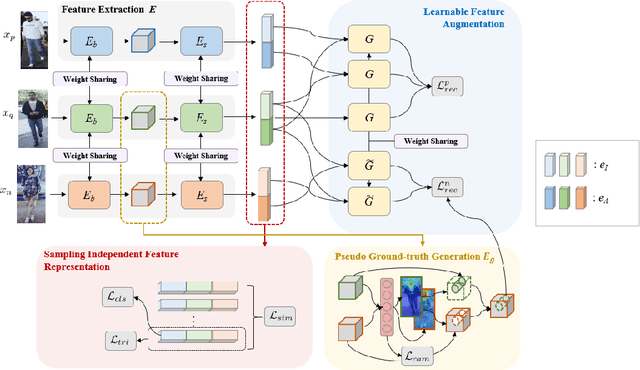

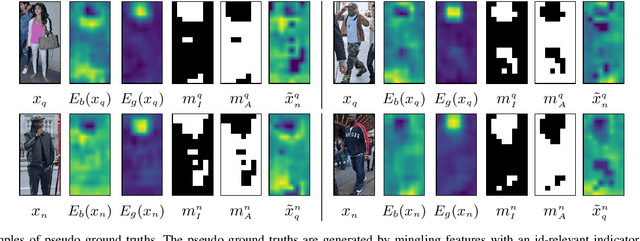

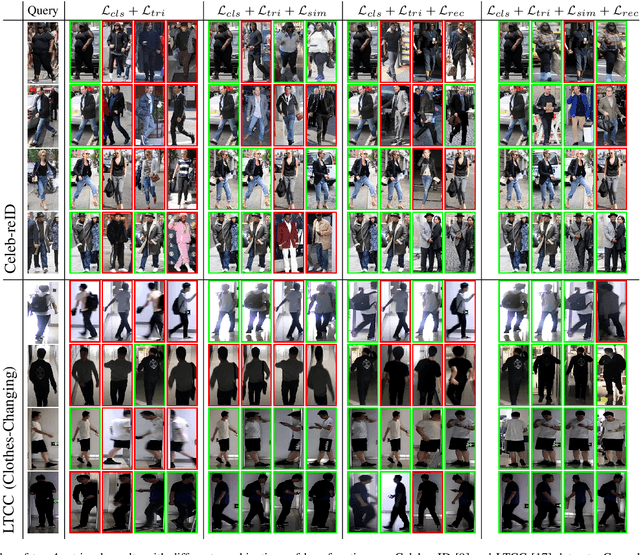

Person re-identification is a problem of identifying individuals across non-overlapping cameras. Although remarkable progress has been made in the re-identification problem, it is still a challenging problem due to appearance variations of the same person as well as other people of similar appearance. Some prior works solved the issues by separating features of positive samples from features of negative ones. However, the performances of existing models considerably depend on the characteristics and statistics of the samples used for training. Thus, we propose a novel framework named sampling independent robust feature representation network~(SirNet) that learns disentangled feature embedding from randomly chosen samples. A carefully designed sampling independent maximum discrepancy loss is introduced to model samples of the same person as a cluster. As a result, the proposed framework can generate additional hard negatives/positives using the learned features, which results in better discriminability from other identities. Extensive experimental results on large-scale benchmark datasets verify that the proposed model is more effective than prior state-of-the-art models.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge