Saliency Attention and Semantic Similarity-Driven Adversarial Perturbation


Jun 18, 2024

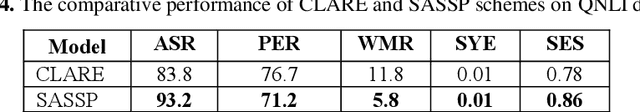

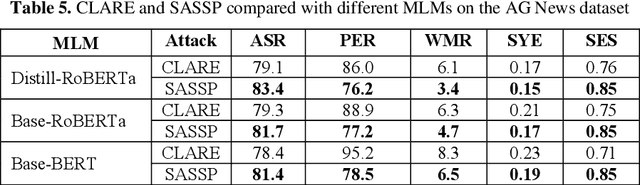

In this paper, we introduce an enhanced textual adversarial attack method, Saliency Attention and Semantic Similarity driven adversarial Perturbation (SASSP). The scheme improves the effectiveness of contextual perturbations by integrating saliency, attention, and semantic similarity. Traditional adversarial attack methods often struggle to preserve semantic consistency and coherence while still deceiving the target model. Our approach addresses this with a three-pronged strategy for word selection and perturbation. First, saliency-based word selection prioritizes words for modification according to their importance to the model's prediction. Second, attention mechanisms focus perturbations on contextually significant words, enhancing the attack's efficacy. Finally, a semantic similarity check combines embedding-based similarity with paraphrase detection. By using Sentence-BERT for embedding similarity and fine-tuned paraphrase detection models from the Sentence Transformers library, the scheme ensures that the perturbed text remains contextually appropriate and semantically consistent with the original. Empirical evaluations demonstrate that SASSP generates adversarial examples that maintain high semantic fidelity while effectively deceiving state-of-the-art natural language processing models. Moreover, compared with the original contextual perturbation scheme, CLARE, SASSP achieves a higher attack success rate and a lower word perturbation rate.
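The selection and filtering steps described in the abstract can be illustrated with a minimal Python sketch. It is not the authors' implementation: the abstract does not say how saliency and attention scores are combined, so the weighted sum (`alpha`), the function names `rank_words` and `filter_candidates`, and the similarity `threshold` are all assumptions made for illustration. Plain lists of numbers stand in for the per-word scores and for the sentence embeddings that a model such as Sentence-BERT would produce.

```python
import math


def rank_words(saliency, attention, alpha=0.5):
    """Rank word positions by a weighted mix of saliency and attention.

    ASSUMPTION: the weighted sum and `alpha` are illustrative only;
    the abstract does not specify how the two signals are combined.
    """
    def normalize(scores):
        total = sum(scores)
        return [s / total for s in scores] if total > 0 else [0.0] * len(scores)

    sal, att = normalize(saliency), normalize(attention)
    combined = [alpha * s + (1 - alpha) * a for s, a in zip(sal, att)]
    # Highest combined score first: these words are perturbed first.
    return sorted(range(len(combined)), key=lambda i: combined[i], reverse=True)


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(u, v))
    norm_u = math.sqrt(sum(x * x for x in u))
    norm_v = math.sqrt(sum(y * y for y in v))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0


def filter_candidates(original_emb, candidate_embs, threshold=0.8):
    """Keep indices of candidates whose embedding stays close to the original.

    ASSUMPTION: `threshold` is a hypothetical value, and the embeddings
    here are toy vectors rather than real sentence embeddings.
    """
    return [
        i for i, emb in enumerate(candidate_embs)
        if cosine(original_emb, emb) >= threshold
    ]


if __name__ == "__main__":
    # Toy per-word scores for a three-word input.
    order = rank_words([0.1, 0.7, 0.2], [0.2, 0.5, 0.3])
    print("perturbation order:", order)

    # Toy 2-D "embeddings" for the original and three perturbed sentences.
    kept = filter_candidates([1.0, 0.0], [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]])
    print("candidates kept:", kept)
```

In a fuller pipeline, the paraphrase detection stage mentioned in the abstract would act as a second filter on the candidates that survive the embedding check; it is omitted here because the abstract does not name a specific model.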
