Safety-Oriented Pruning and Interpretation of Reinforcement Learning Policies

Paper and Code

Sep 16, 2024

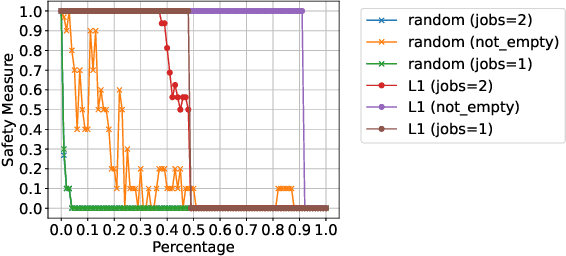

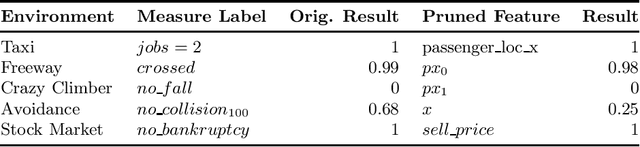

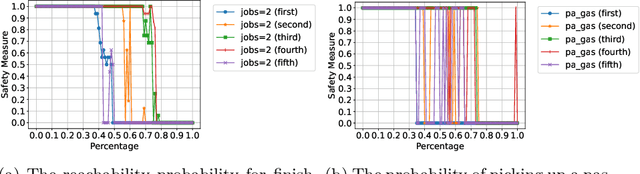

Pruning neural networks (NNs) can streamline them but risks removing vital parameters from safe reinforcement learning (RL) policies. We introduce an interpretable RL method called VERINTER, which combines NN pruning with model checking to ensure interpretable RL safety. VERINTER exactly quantifies the effects of pruning and the impact of neural connections on complex safety properties by analyzing changes in safety measurements. This method maintains safety in pruned RL policies and enhances understanding of their safety dynamics, which has proven effective in multiple RL settings.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge