Robust Market Making via Adversarial Reinforcement Learning

Paper and Code

Mar 03, 2020

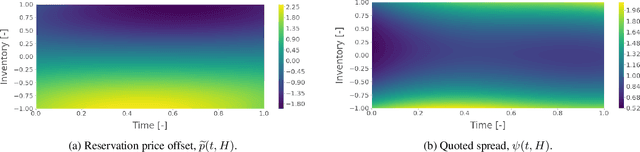

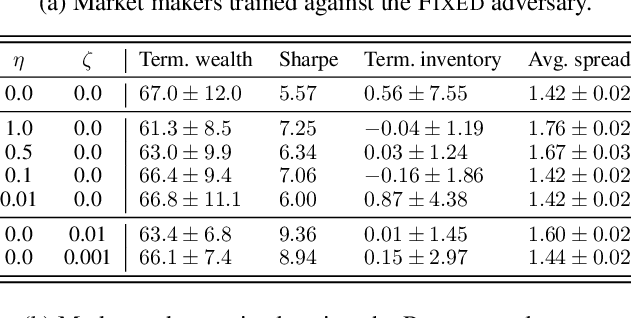

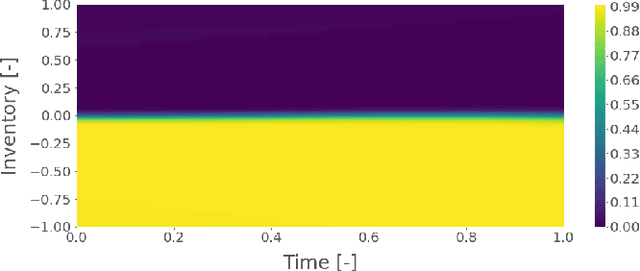

We show that adversarial reinforcement learning (ARL) can be used to produce market marking agents that are robust to adversarial and adaptively chosen market conditions. To apply ARL, we turn the well-studied single-agent model of Avellaneda and Stoikov [2008] into a discrete-time zero-sum game between a market maker and adversary, a proxy for other market participants who would like to profit at the market maker's expense. We empirically compare two conventional single-agent RL agents with ARL, and show that our ARL approach leads to: 1) the emergence of naturally risk-averse behaviour without constraints or domain-specific penalties; 2) significant improvements in performance across a set of standard metrics, evaluated with or without an adversary in the test environment, and; 3) improved robustness to model uncertainty. We empirically demonstrate that our ARL method consistently converges, and we prove for several special cases that the profiles that we converge to are Nash equilibria in a corresponding simplified single-stage game.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge