Robust Image Classification Using A Low-Pass Activation Function and DCT Augmentation


Jul 18, 2020

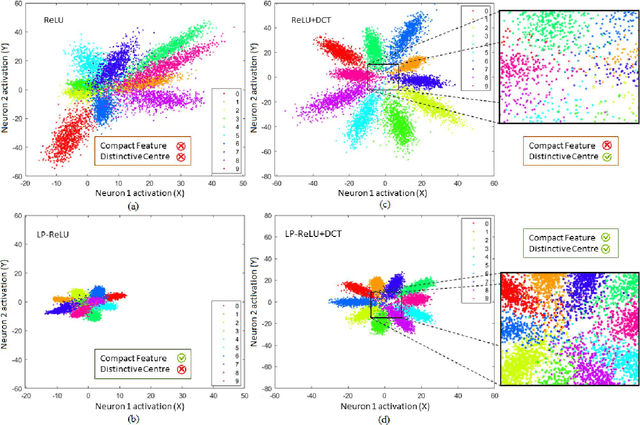

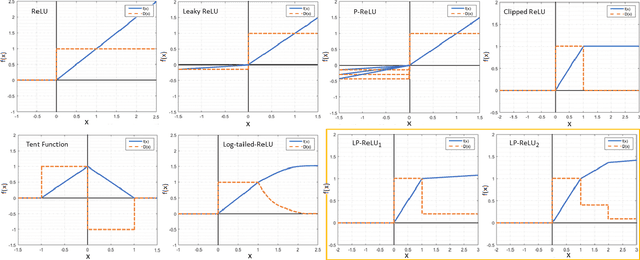

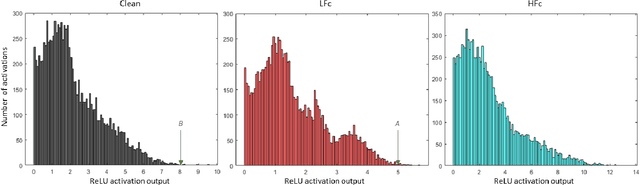

A performance disparity of Convolutional Neural Networks (CNNs) between clean and corrupted datasets has recently been noticed. In this work, we analyse common corruptions from a frequency perspective, i.e., High Frequency corruptions or HFc (e.g., noise) and Low Frequency corruptions or LFc (e.g., blur). A common signal processing solution to HFc is low-pass filtering. Intriguingly, the de facto Activation Function (AF) used in modern CNNs, i.e., ReLU, does not have any filtering mechanism, resulting in unstable performance on HFc. We propose a family of novel AFs with low-pass filtering to improve robustness against HFc (we call it Low-Pass ReLU or LP-ReLU). To deal with LFc, we further enhance the AFs with Discrete Cosine Transform (DCT) based augmentation. LP-ReLU, coupled with DCT augmentation, enables a deep network to tackle a variety of corruptions. We evaluate our method on the CIFAR-10-C and Tiny ImageNet-C datasets and achieve improvements of 5.1% and 7.2% in accuracy, respectively, over the State-Of-The-Art (SOTA). We further evaluate our method's performance stability on a variety of perturbations available in CIFAR-10-P and Tiny ImageNet-P, where we also achieve new SOTA results. Finally, we devise a decision space visualisation process to further strengthen the understanding of CNNs' lack of robustness against corrupted data.
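The abstract names two ingredients, a low-pass activation and DCT-based augmentation, but gives no functional form for either. The NumPy sketch below is therefore an illustration under stated assumptions, not the paper's method. `lp_relu` assumes a piecewise-linear shape that passes activations up to a hypothetical `cutoff` and damps anything above it by a hypothetical `slope`. `dct_dropout` assumes the augmentation randomly zeroes 2-D DCT coefficients of a grayscale image. All function names, parameters, and default values are made up for this example.

```python
import numpy as np


def lp_relu(x, cutoff=6.0, slope=0.05):
    """Hypothetical low-pass ReLU: identity on [0, cutoff], damped above it.

    `cutoff` and `slope` are illustrative values, not taken from the paper.
    """
    x = np.asarray(x, dtype=np.float64)
    pos = np.maximum(x, 0.0)                 # standard ReLU part
    excess = np.maximum(pos - cutoff, 0.0)   # amount by which we exceed the cutoff
    return np.minimum(pos, cutoff) + slope * excess


def dct_matrix(n):
    """Orthonormal DCT-II matrix C, so that C @ v is the DCT of a length-n vector v."""
    k = np.arange(n)[:, None]   # frequency index (rows)
    i = np.arange(n)[None, :]   # sample index (columns)
    c = np.sqrt(2.0 / n) * np.cos(np.pi * (2 * i + 1) * k / (2 * n))
    c[0, :] = np.sqrt(1.0 / n)  # DC row uses a different normalisation
    return c


def dct_dropout(image, drop_prob=0.2, rng=None):
    """Assumed augmentation: randomly zero 2-D DCT coefficients of a grayscale image."""
    rng = np.random.default_rng() if rng is None else rng
    image = np.asarray(image, dtype=np.float64)
    ch = dct_matrix(image.shape[0])
    cw = dct_matrix(image.shape[1])
    coeffs = ch @ image @ cw.T                     # forward 2-D DCT
    keep = rng.random(coeffs.shape) >= drop_prob   # True where a coefficient survives
    keep[0, 0] = True                              # always keep the DC term (mean brightness)
    return ch.T @ (coeffs * keep) @ cw             # inverse 2-D DCT


if __name__ == "__main__":
    print(lp_relu([-1.0, 3.0, 10.0]))
    img = np.random.default_rng(0).random((8, 8))
    print(dct_dropout(img, drop_prob=0.3, rng=np.random.default_rng(1)).shape)
```

Building the DCT matrix by hand keeps the sketch dependent on NumPy alone. In practice a library routine for the multidimensional DCT would be the more usual choice, and for colour images the transform would be applied per channel.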
