Robust Asynchronous and Network-Independent Cooperative Learning

Paper and Code

Oct 20, 2020

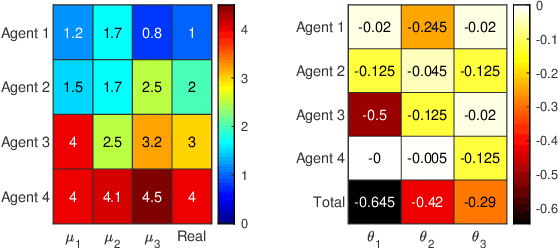

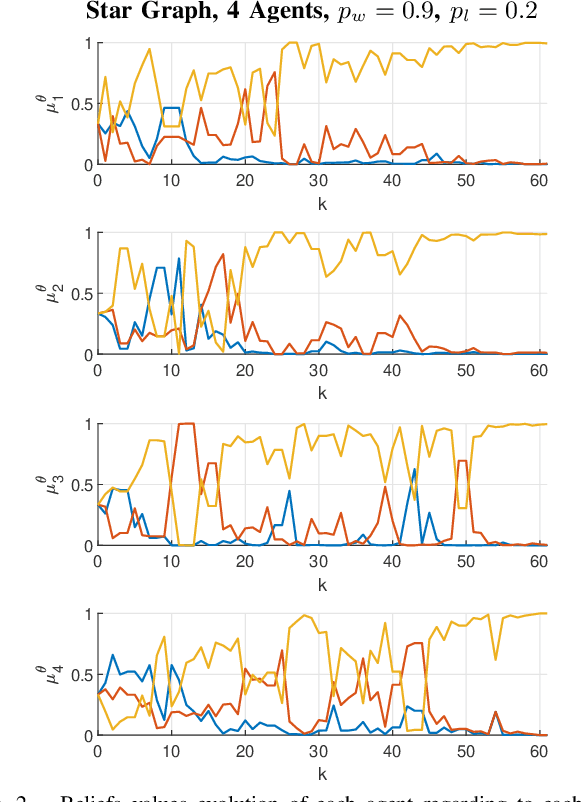

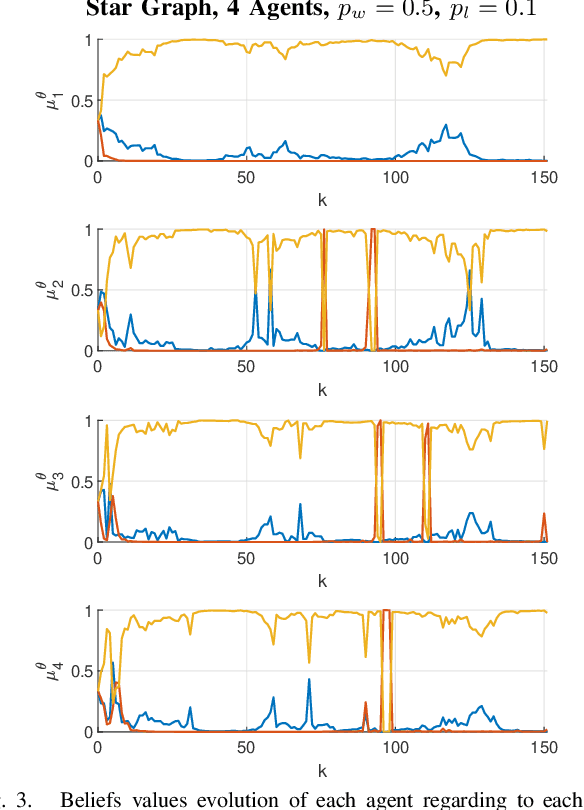

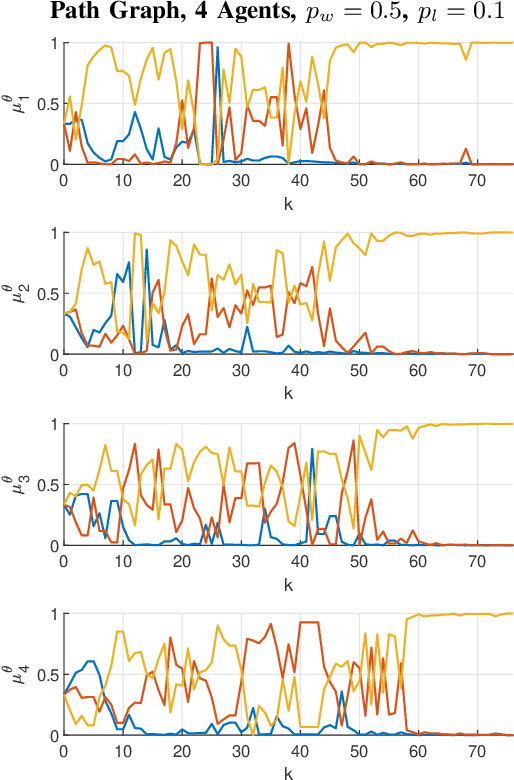

We consider the model of cooperative learning via distributed non-Bayesian learning, where a network of agents tries to jointly agree on a hypothesis that best describes a sequence of locally available observations. Building upon recently proposed weak communication network models, we propose a robust cooperative learning rule that allows asynchronous communications, message delays, unpredictable message losses, and directed communication among nodes. We show that our proposed learning dynamics guarantee that the beliefs of all agents on the wrong hypotheses decay asymptotically at an exponential rate, so that the beliefs of all agents concentrate on the optimal hypotheses. Numerical experiments support these results on a number of network setups.
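To make the setting concrete, the following is a minimal sketch of a generic synchronous log-linear non-Bayesian update on a small directed network. It is not the robust rule proposed in the paper: it assumes lossless, delay-free, synchronous communication, and every name in it (`simulate`, `W`, `L`, `T`) is hypothetical and chosen only for illustration. Each agent geometrically averages the beliefs it receives and then applies a Bayesian-style update with its own observation.

```python
import math
import random


def simulate(weights, likelihoods, true_dist, steps, seed=0):
    """Synchronous log-linear belief update (illustrative baseline only).

    weights[i][j]      weight agent i puts on agent j's belief (rows sum to 1)
    likelihoods[i][k]  agent i's observation model under hypothesis k
    true_dist[i]       distribution that actually generates agent i's data
    """
    rng = random.Random(seed)
    n = len(weights)
    m = len(likelihoods[0])
    # Start every agent from a uniform belief, stored in log space.
    log_beliefs = [[math.log(1.0 / m)] * m for _ in range(n)]
    for _ in range(steps):
        updated = []
        for i in range(n):
            # Draw agent i's private observation for this round.
            symbols = range(len(true_dist[i]))
            x = rng.choices(symbols, weights=true_dist[i])[0]
            row = []
            for k in range(m):
                # Geometric averaging of beliefs = weighted sum of log-beliefs.
                mixed = sum(weights[i][j] * log_beliefs[j][k] for j in range(n))
                # Local update with the likelihood of the new observation.
                row.append(mixed + math.log(likelihoods[i][k][x]))
            # Normalize with a log-sum-exp so beliefs sum to one.
            peak = max(row)
            log_z = peak + math.log(sum(math.exp(v - peak) for v in row))
            updated.append([v - log_z for v in row])
        log_beliefs = updated
    return [[math.exp(v) for v in row] for row in log_beliefs]


# Directed ring of three agents: each keeps half its weight on itself
# and puts half on its predecessor (assumed topology for this sketch).
W = [
    [0.5, 0.0, 0.5],
    [0.5, 0.5, 0.0],
    [0.0, 0.5, 0.5],
]
# Two hypotheses over a binary observation. Only agent 0 can tell them apart.
L = [
    [[0.3, 0.7], [0.7, 0.3]],
    [[0.5, 0.5], [0.5, 0.5]],
    [[0.5, 0.5], [0.5, 0.5]],
]
# Agent 0's data follows hypothesis 0; the other agents see uninformative coin flips.
T = [[0.3, 0.7], [0.5, 0.5], [0.5, 0.5]]

beliefs = simulate(W, L, T, steps=500)
for i, b in enumerate(beliefs):
    print(f"agent {i}: belief on hypothesis 0 = {b[0]:.6f}")
```

In this toy setup only one agent has informative observations, yet the mixing step carries that information around the directed ring, so all three beliefs concentrate on hypothesis 0. Handling asynchrony, delays, and dropped messages, which is the paper's contribution, would require changes that this sketch does not attempt.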
