Robust and fine-grained prosody control of end-to-end speech synthesis

Nov 06, 2018

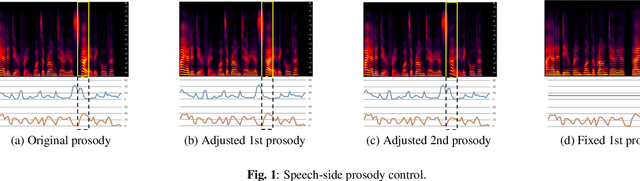

We propose prosody embeddings for emotional and expressive speech synthesis networks. The proposed methods introduce temporal structures into the embedding networks, which enable fine-grained control of the speaking style of the synthesized speech. The temporal structures can be designed on either the speech side or the text side, which leads to different control resolutions in time. The prosody embedding networks are plugged into end-to-end speech synthesis networks and trained with no supervision other than the target speech to be synthesized. The prosody embedding networks learn to extract prosodic features. By adjusting the learned prosody features, we can change the pitch and amplitude of the synthesized speech at both the frame level and the phoneme level. We also introduce temporal normalization of prosody embeddings, which shows better robustness against speaker perturbation in prosody transfer tasks.
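
As a rough illustration of the last two ideas, the sketch below treats a prosody embedding as a sequence of per-step vectors (one per frame or per phoneme). The abstract does not define the normalization, so this assumes the simplest reading: subtracting each dimension's mean over time. The names `temporal_normalize` and `shift_dimension` are hypothetical and do not come from the paper or its code.

```python
from typing import List

# One embedding vector per time step (frame or phoneme): [time_steps][dim]
EmbeddingSeq = List[List[float]]


def temporal_normalize(embeddings: EmbeddingSeq) -> EmbeddingSeq:
    """Subtract the per-dimension mean over time.

    Assumption: mean subtraction stands in for the paper's temporal
    normalization, whose exact form the abstract does not give.
    """
    if not embeddings:
        return []
    steps = len(embeddings)
    dim = len(embeddings[0])
    means = [sum(frame[d] for frame in embeddings) / steps for d in range(dim)]
    return [[frame[d] - means[d] for d in range(dim)] for frame in embeddings]


def shift_dimension(
    embeddings: EmbeddingSeq, dim_index: int, offset: float
) -> EmbeddingSeq:
    """Add a constant offset to one embedding dimension at every time step.

    Hypothetical control knob: which dimension (if any) tracks pitch or
    amplitude would have to be found by inspecting a trained model.
    """
    return [
        [value + offset if d == dim_index else value for d, value in enumerate(frame)]
        for frame in embeddings
    ]


if __name__ == "__main__":
    reference = [[1.0, 2.0], [3.0, 6.0]]  # toy 2-step, 2-dim embedding
    normalized = temporal_normalize(reference)
    adjusted = shift_dimension(normalized, dim_index=0, offset=0.5)
    print(normalized)
    print(adjusted)
```

Under this assumption, removing the time-averaged component leaves only the variation over time, which is one plausible reason a normalized embedding would depend less on the reference speaker's overall pitch or loudness.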
