RHAML: Rendezvous-based Hierarchical Architecture for Mutual Localization


May 20, 2024

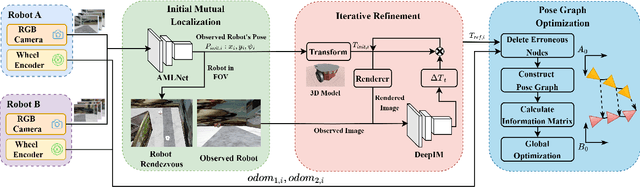

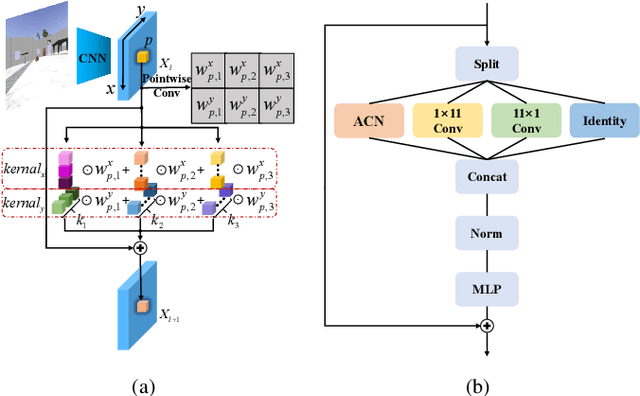

Mutual localization serves as the foundation for collaborative perception and task assignment in multi-robot systems. Effectively using limited onboard sensors for mutual localization between marker-less robots is therefore a worthwhile goal. However, because previous work has not adequately accounted for large variations in the scale of the observed robot or for the refinement of localization results, it has shown limited accuracy when robots are equipped only with RGB cameras. To improve localization precision, this paper proposes a novel rendezvous-based hierarchical architecture for mutual localization (RHAML). First, anisotropic convolutions are introduced into the network to learn multi-scale robot features, yielding initial localization results. Then, an iterative refinement module with rendering is employed to adjust the observed robot poses. Finally, a pose graph that takes multi-frame observations into account is constructed to globally optimize all localization results. The result is a flexible architecture in which appropriate modules can be selected according to requirements. Simulations demonstrate that RHAML effectively addresses the multi-robot mutual localization problem, achieving translation errors below 2 cm and rotation errors below 0.5 degrees when the observed robots vary by 5 m in depth. Moreover, its practical utility is validated by applying it to map fusion when multiple robots explore unknown environments.
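
The abstract names the final stage only as a pose graph that globally optimizes localization results across multiple frames. As a hedged illustration of what such a global optimization does, the sketch below solves a deliberately simplified, translation-only 2D pose graph. Each node is a hypothetical robot position in a common frame, and each edge is a relative translation measurement (for example from odometry or a mutual observation). One node is anchored at the origin, and the least-squares problem is solved with Gauss-Seidel sweeps. Rotations, measurement covariances, and RHAML's actual graph structure are omitted. The names `optimize_translations` and `Edge`, along with the graph layout, are illustrative assumptions, not the paper's implementation.

```python
from typing import Dict, List, Tuple

Vec = Tuple[float, float]
# Hypothetical edge format: (i, j, z_ij), meaning the measurement x_j - x_i ≈ z_ij
Edge = Tuple[int, int, Vec]


def optimize_translations(num_nodes: int, edges: List[Edge], anchor: int = 0,
                          iters: int = 500, tol: float = 1e-10) -> List[Vec]:
    """Translation-only pose graph (simplified assumption, not RHAML's method):
    minimise sum ||x_j - x_i - z_ij||^2 with x[anchor] fixed at the origin.
    Assumes the graph is connected to the anchor node."""
    # For each node, store (neighbour, offset) pairs such that
    # x_node ≈ x_neighbour + offset, one entry per incident edge.
    adj: Dict[int, List[Tuple[int, Vec]]] = {k: [] for k in range(num_nodes)}
    for i, j, (zx, zy) in edges:
        adj[j].append((i, (zx, zy)))    # x_j ≈ x_i + z_ij
        adj[i].append((j, (-zx, -zy)))  # x_i ≈ x_j - z_ij

    x: List[Vec] = [(0.0, 0.0)] * num_nodes
    for _ in range(iters):
        max_step = 0.0
        for k in range(num_nodes):
            if k == anchor or not adj[k]:
                continue
            # Setting the gradient to zero gives:
            # deg(k) * x_k = sum over incident edges of (x_neighbour + offset)
            sx = sum(x[n][0] + o[0] for n, o in adj[k])
            sy = sum(x[n][1] + o[1] for n, o in adj[k])
            new = (sx / len(adj[k]), sy / len(adj[k]))
            max_step = max(max_step, abs(new[0] - x[k][0]), abs(new[1] - x[k][1]))
            x[k] = new  # Gauss-Seidel: later nodes in this sweep see the update
        if max_step < tol:
            break
    return x


# Usage: three positions observed over several frames, with a slightly
# inconsistent loop-closure edge 0 -> 2 whose error is spread over the graph.
if __name__ == "__main__":
    edges: List[Edge] = [(0, 1, (1.0, 0.0)), (1, 2, (0.0, 1.0)), (0, 2, (1.03, 0.97))]
    for k, p in enumerate(optimize_translations(3, edges)):
        print(k, p)
```

A full system would optimize SE(2) or SE(3) poses weighted by information matrices, typically with a solver library such as g2o, GTSAM, or Ceres. The translation-only case is used here only because it reduces to a linear system, so plain iterative averaging is enough to show how redundant multi-frame measurements are reconciled.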
