Restore from Restored: Single-image Inpainting

Paper and Code

Oct 25, 2021

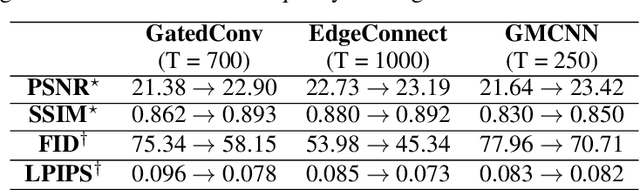

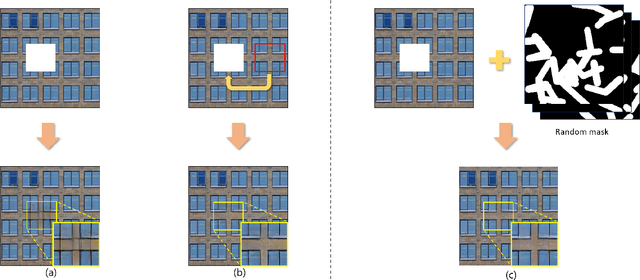

Recent image inpainting methods have shown promising results due to the power of deep learning, which can explore external information available from the large training dataset. However, many state-of-the-art inpainting networks are still limited in exploiting internal information available in the given input image at test time. To mitigate this problem, we present a novel and efficient self-supervised fine-tuning algorithm that can adapt the parameters of fully pre-trained inpainting networks without using ground-truth target images. We update the parameters of the pre-trained state-of-the-art inpainting networks by utilizing existing self-similar patches (i.e., self-exemplars) within the given input image without changing the network architecture and improve the inpainting quality by a large margin. Qualitative and quantitative experimental results demonstrate the superiority of the proposed algorithm, and we achieve state-of-the-art inpainting results on publicly available benchmark datasets.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge