Resolving Referring Expressions in Images With Labeled Elements

Paper and Code

Oct 25, 2018

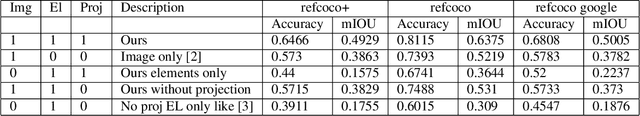

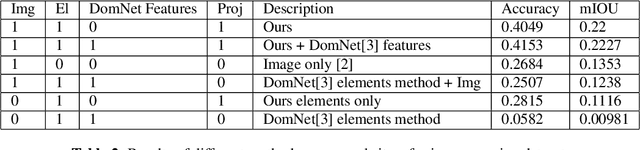

Images may have elements containing text and a bounding box associated with them, for example, text identified via optical character recognition on a computer screen image, or a natural image with labeled objects. We present an end-to-end trainable architecture to incorporate the information from these elements and the image to segment/identify the part of the image a natural language expression is referring to. We calculate an embedding for each element and then project it onto the corresponding location (i.e., the associated bounding box) of the image feature map. We show that this architecture gives an improvement in resolving referring expressions, over only using the image, and other methods that incorporate the element information. We demonstrate experimental results on the referring expression datasets based on COCO, and on a webpage image referring expression dataset that we developed.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge