Representation Learning with Fine-grained Patterns

May 19, 2020

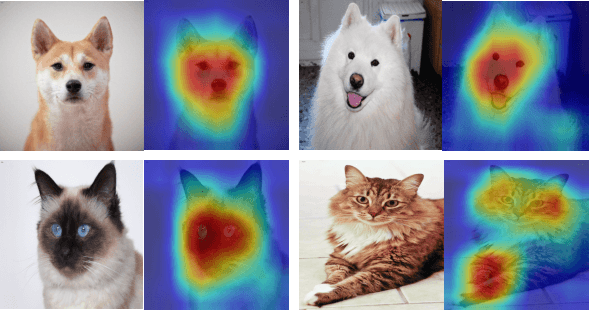

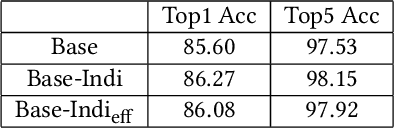

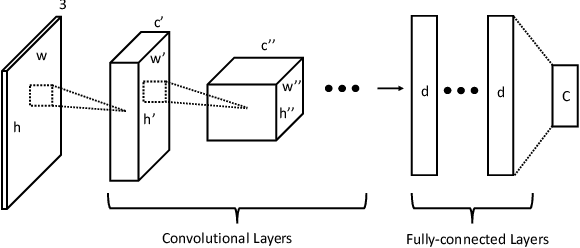

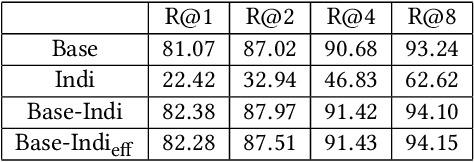

With advances in computational power and data-collection techniques, deep learning outperforms many existing algorithms on benchmark data sets. Many efforts have been devoted to studying the mechanism of deep learning. One important observation is that deep learning can learn discriminative patterns directly from raw data in a task-dependent manner. As a result, the patterns obtained by deep learning significantly outperform hand-crafted features. However, those patterns can be misled by the training task when the target task is different. In this work, we investigate a prevalent problem in real-world applications, where the training set only has access to supervised information from superclasses but the target task is defined on fine-grained classes. Each superclass can contain multiple fine-grained classes. In this scenario, fine-grained patterns are essential for classifying examples from fine-grained classes, yet they can be neglected when training only with labels from superclasses. To mitigate this challenge, we propose an algorithm that explores fine-grained patterns sufficiently without additional supervised information. Moreover, our analysis indicates that the performance of the learned patterns on the fine-grained classes can be theoretically guaranteed. Finally, an efficient algorithm is developed to reduce the cost of optimization. Experiments on real-world data sets verify that the proposed algorithm can significantly improve performance on the fine-grained classes with information from superclasses only.
