Remaining Useful Life Estimation Under Uncertainty with Causal GraphNets

Paper and Code

Nov 23, 2020

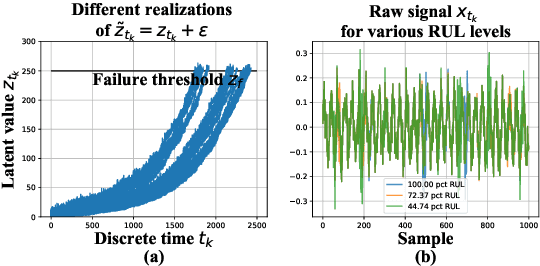

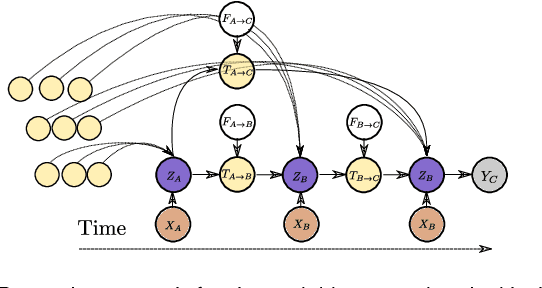

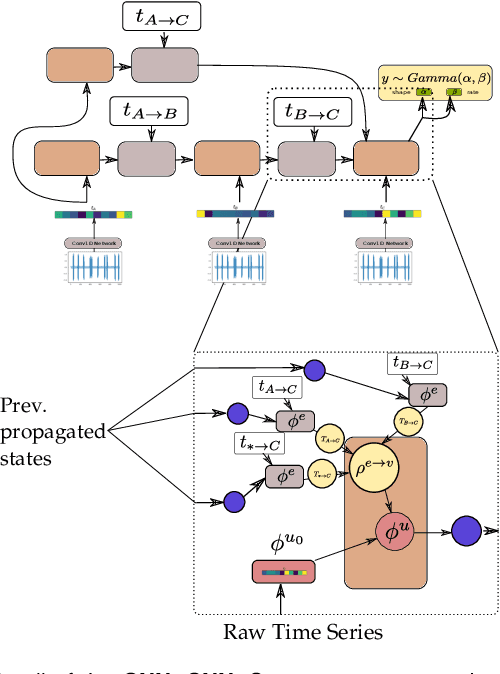

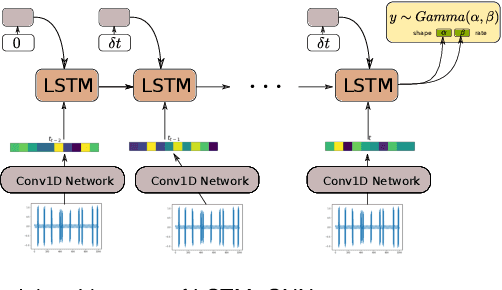

In this work, a novel approach for the construction and training of time series models is presented that deals with the problem of learning on large time series with non-equispaced observations, which at the same time may possess features of interest that span multiple scales. The proposed method is appropriate for constructing predictive models for non-stationary stochastic time series.The efficacy of the method is demonstrated on a simulated stochastic degradation dataset and on a real-world accelerated life testing dataset for ball-bearings. The proposed method, which is based on GraphNets, implicitly learns a model that describes the evolution of the system at the level of a state-vector rather than of a raw observation. The proposed approach is compared to a recurrent network with a temporal convolutional feature extractor head (RNN-tCNN) which forms a known viable alternative for the problem context considered. Finally, by taking advantage of recent advances in the computation of reparametrization gradients for learning probability distributions, a simple yet effective technique for representing prediction uncertainty as a Gamma distribution over remaining useful life predictions is employed.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge