Relationship Explainable Multi-objective Optimization Via Vector Value Function Based Reinforcement Learning

Oct 02, 2019

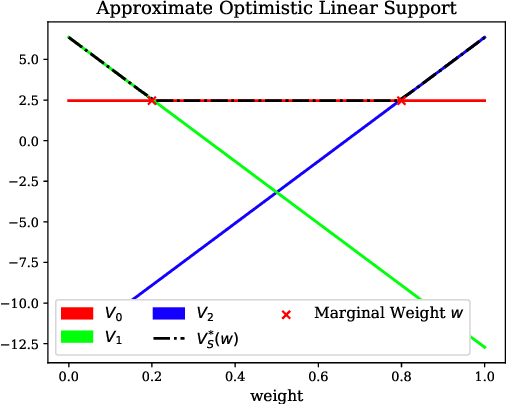

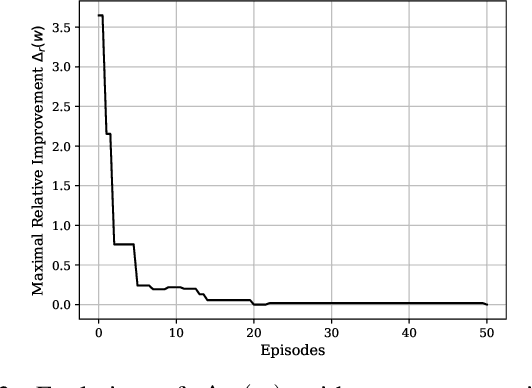

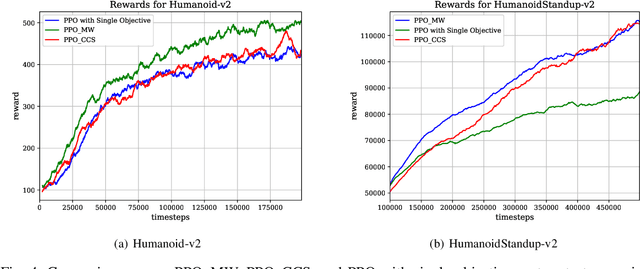

Solving multi-objective optimization problems is important in various applications where users are interested in obtaining optimal policies subject to multiple, often conflicting, objectives. A typical approach to obtaining optimal policies is to first construct a loss function based on a scalarization of the individual objectives, and then find the policy that minimizes this loss. However, optimizing the scalarized (and weighted) loss does not guarantee high performance on each of the possibly conflicting objectives. In this paper, we propose a vector value based reinforcement learning approach that explicitly learns the inter-objective relationship and optimizes multiple objectives based on the learned relationship. In particular, the proposed method first defines a relationship matrix, a mathematical representation of the inter-objective relationship, and then creates one actor and multiple critics that co-learn the relationship matrix and action selection. The proposed approach can quantify the inter-objective relationship via reinforcement learning when the impact of one objective on another is unknown a priori. We also provide a rigorous convergence analysis of the proposed approach and present a quantitative evaluation based on two testing scenarios.
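To make the limitation of scalarization concrete, here is a minimal sketch in Python. It is not taken from the paper: the two quadratic objectives, the weights, and the function names `scalarized_minimizer` and `objectives` are illustrative assumptions. It shows that the minimizer of a weighted-sum loss can score well on one objective while doing poorly on the other.

```python
# Toy illustration (hypothetical, not the paper's method or test scenarios).
# Two conflicting objectives over a scalar decision variable x:
#   f1(x) = x^2         (minimized at x = 0)
#   f2(x) = (x - 2)^2   (minimized at x = 2)

def objectives(x):
    """Return the values of the two assumed objectives at x."""
    return x ** 2, (x - 2.0) ** 2

def scalarized_minimizer(w1, w2):
    """Closed-form minimizer of the weighted sum w1*f1(x) + w2*f2(x).

    Setting the derivative 2*w1*x + 2*w2*(x - 2) to zero gives
    x = 2*w2 / (w1 + w2).
    """
    return 2.0 * w2 / (w1 + w2)

# Weights that favor the first objective (an arbitrary choice).
x_star = scalarized_minimizer(0.9, 0.1)
f1, f2 = objectives(x_star)

print(f"x* = {x_star:.2f}, f1 = {f1:.2f}, f2 = {f2:.2f}")
```

With these assumed weights the scalarized optimum sits near the minimizer of the first objective, so the second objective stays far from its own best value of 0. Changing the weights only moves the trade-off point; it does not reveal how the two objectives influence each other, which is the gap the learned relationship matrix is meant to address.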
