Reinforcement Learning-based Dynamic Service Placement in Vehicular Networks

Paper and Code

Jun 01, 2021

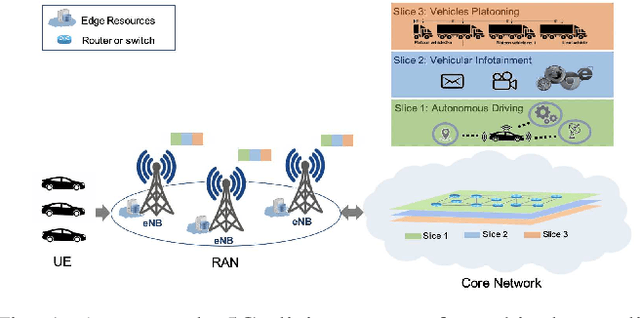

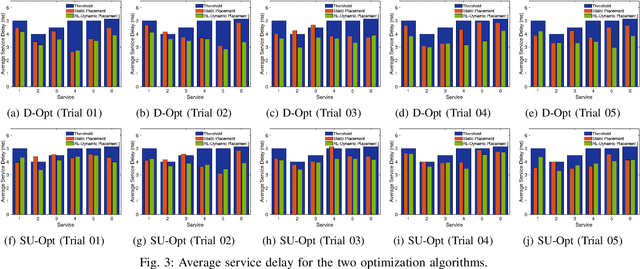

The emergence of technologies such as 5G and mobile edge computing has enabled provisioning of different types of services with different resource and service requirements to the vehicles in a vehicular network.The growing complexity of traffic mobility patterns and dynamics in the requests for different types of services has made service placement a challenging task. A typical static placement solution is not effective as it does not consider the traffic mobility and service dynamics. In this paper, we propose a reinforcement learning-based dynamic (RL-Dynamic) service placement framework to find the optimal placement of services at the edge servers while considering the vehicle's mobility and dynamics in the requests for different types of services. We use SUMO and MATLAB to carry out simulation experiments. In our learning framework, for the decision module, we consider two alternative objective functions-minimizing delay and minimizing edge server utilization. We developed an ILP based problem formulation for the two objective functions. The experimental results show that 1) compared to static service placement, RL-based dynamic service placement achieves fair utilization of edge server resources and low service delay, and 2) compared to delay-optimized placement, server utilization optimized placement utilizes resources more effectively, achieving higher fairness with lower edge-server utilization.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge