Reinforcement Learning-Based Coverage Path Planning with Implicit Cellular Decomposition

Paper and Code

Oct 18, 2021

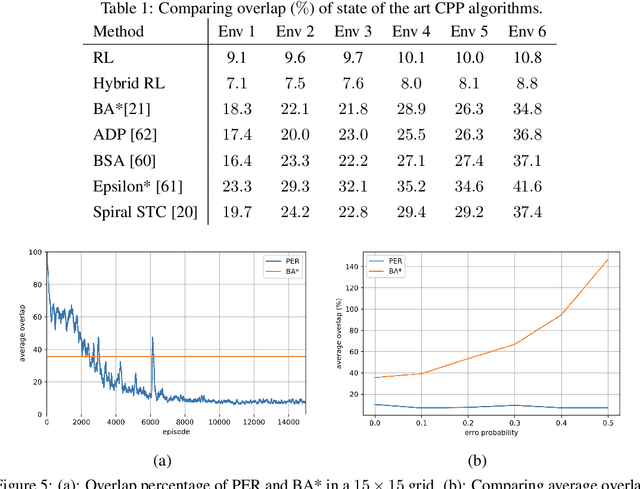

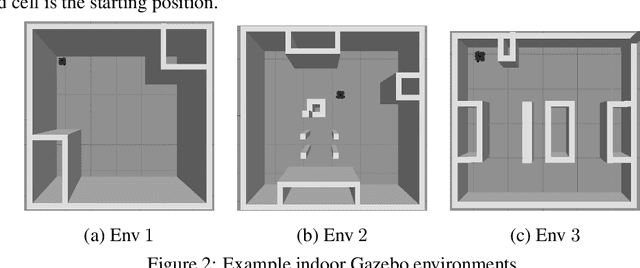

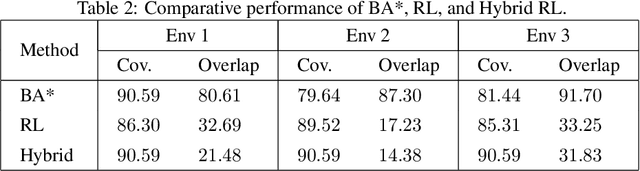

Coverage path planning in a generic known environment is shown to be NP-hard. When the environment is unknown, it becomes more challenging as the robot is required to rely on its online map information built during coverage for planning its path. A significant research effort focuses on designing heuristic or approximate algorithms that achieve reasonable performance. Such algorithms have sub-optimal performance in terms of covering the area or the cost of coverage, e.g., coverage time or energy consumption. In this paper, we provide a systematic analysis of the coverage problem and formulate it as an optimal stopping time problem, where the trade-off between coverage performance and its cost is explicitly accounted for. Next, we demonstrate that reinforcement learning (RL) techniques can be leveraged to solve the problem computationally. To this end, we provide some technical and practical considerations to facilitate the application of the RL algorithms and improve the efficiency of the solutions. Finally, through experiments in grid world environments and Gazebo simulator, we show that reinforcement learning-based algorithms efficiently cover realistic unknown indoor environments, and outperform the current state of the art.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge