Regularly Updated Deterministic Policy Gradient Algorithm


Jul 01, 2020

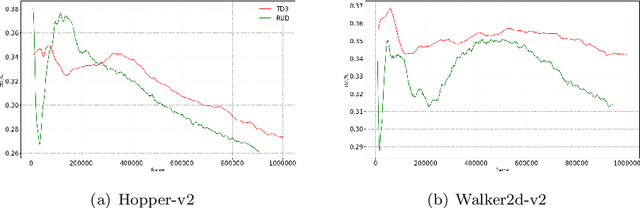

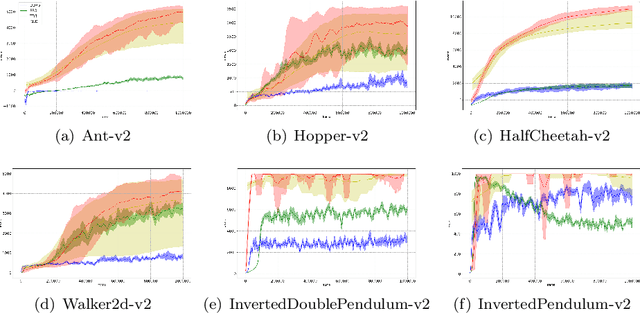

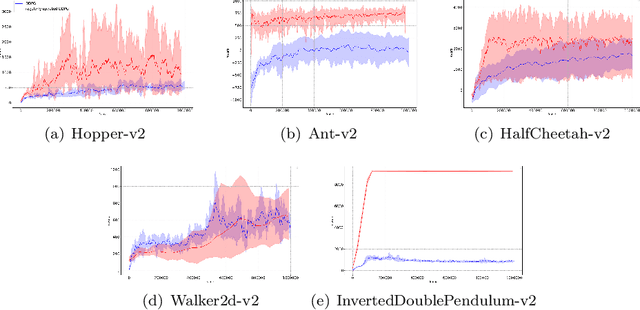

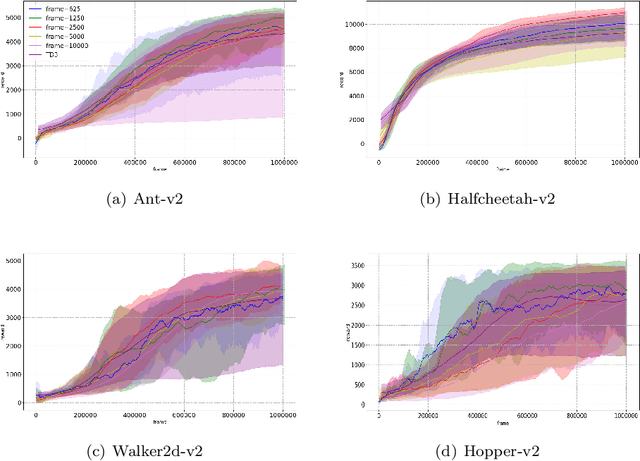

The Deep Deterministic Policy Gradient (DDPG) algorithm is one of the best-known reinforcement learning methods. However, it is inefficient and unstable in practical applications. Moreover, the bias and variance of the Q-value estimate in the target function are sometimes difficult to control. To address these problems, this paper proposes a Regularly Updated Deterministic (RUD) policy gradient algorithm. It proves theoretically that learning with RUD makes better use of new data in the replay buffer than the traditional procedure. In addition, the low variance of the Q value in RUD makes it better suited to the current Clipped Double Q-learning strategy. The paper presents a comparison experiment against previous methods, an ablation experiment against the original DDPG, and other analytical experiments in MuJoCo environments. The experimental results demonstrate the effectiveness and superiority of RUD.
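Clipped Double Q-learning, which the abstract refers to, is the target rule popularized by TD3. Two target critics evaluate the next state-action pair, and the smaller of the two estimates is used in the bootstrap target. What follows is a minimal sketch of that standard rule, not of RUD itself. The function name `clipped_double_q_target` and its plain-list inputs are illustrative assumptions. The critic outputs are passed in as precomputed numbers rather than produced by networks.

```python
from typing import List, Sequence


def clipped_double_q_target(
    rewards: Sequence[float],
    dones: Sequence[bool],
    q1_next: Sequence[float],
    q2_next: Sequence[float],
    gamma: float = 0.99,
) -> List[float]:
    """Compute y = r + gamma * (1 - done) * min(Q1', Q2') per transition.

    Hypothetical helper: q1_next and q2_next stand in for the two target
    critics' estimates at (s', pi'(s')), supplied here as plain numbers.
    """
    if not (len(rewards) == len(dones) == len(q1_next) == len(q2_next)):
        raise ValueError("all inputs must have the same length")
    targets: List[float] = []
    for r, done, q1, q2 in zip(rewards, dones, q1_next, q2_next):
        # Using the smaller estimate counters the overestimation bias of a
        # single critic; terminal transitions do not bootstrap at all.
        bootstrap = 0.0 if done else min(q1, q2)
        targets.append(r + gamma * bootstrap)
    return targets


if __name__ == "__main__":
    batch_targets = clipped_double_q_target(
        rewards=[1.0, 0.5],
        dones=[False, True],
        q1_next=[10.0, 3.0],
        q2_next=[8.0, 4.0],
        gamma=0.9,
    )
    print(batch_targets)
```

The minimum trades a risk of slight underestimation for protection against overestimation. The abstract's claim is that the lower variance of RUD's Q estimates makes it a good fit for this rule.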
